Why Data Teams Will Become the Next Power Centre in AI-Driven Companies

For most of the digital era, data inside large organisations was treated as a background utility. It powered reports, informed strategy decks, and occasionally produced insights that executives could point to as evidence that the business was becoming more sophisticated. Data teams were important, but rarely central. Their work existed to support others, not to define the boundaries of what the organisation could safely do.

Even as companies talked about becoming “data-driven,” the role of the data team was largely infrastructural. They were expected to build pipelines, maintain warehouses, and ensure dashboards were refreshed on time. Their success was measured by availability and speed, not by judgment or restraint. If more data could be made accessible, that was usually seen as progress rather than risk.

That position has changed, quietly but decisively.

As AI systems move from pilots into live operations, internal data has become something far more exposed. It is no longer analysed periodically or reviewed at a distance. It is consumed continuously by systems that automate decisions, recommend actions, and increasingly act without human supervision. Data that once informed humans now instructs machines, and those machines operate at a scale and speed that magnifies even small errors.

When something goes wrong, the impact is immediate and external, showing up as a privacy breach, a regulatory inquiry, a leaked dataset, or an outcome the organisation is suddenly required to explain. The feedback loop between data quality and real-world consequences has tightened dramatically, leaving far less room for ambiguity or delay.

Inside companies, responsibility has shifted upstream toward the people who manage data flows. That shift has not been accompanied by formal authority, budget, or organisational redesign, but it has altered where accountability now lands when AI systems fail. Data teams increasingly find themselves answering questions that were never part of their original remit, not because they sought that role, but because they are the only ones close enough to the evidence.

The risk was always internal. AI simply made it visible.

From a security perspective, the core issue is not that organisations suddenly became careless with data, but that the assumptions underlying enterprise security no longer match how data is used.

“Data and data teams are not the problem in an organisation,” said Kalle Bjorn, Senior Director of Systems Engineering at Fortinet. “The problem is that data now flows freely across users, endpoints, cloud applications, generative AI tools, and hybrid workspaces in ways that traditional defences were never designed to observe or control.”

Most corporate security architectures were built around the idea of a perimeter, with controls designed to detect and block unauthorised access from the outside. That model presumes that what happens internally is broadly legitimate and manageable through policy. AI-era data usage breaks that presumption entirely.

Employees now interact with sensitive information through a growing number of tools that sit outside traditional monitoring systems. Contractors move between environments with different access rules. Data flows into third-party AI platforms that were never part of the original security design. Each individual action may appear harmless, but collectively they create exposure that is difficult to see, let alone control.

“In insider-driven incidents, risk is behavioural and contextual,” Bjorn said. “It’s embedded in everyday work, which is why most organisations struggle to detect it early. The majority of the incidents we see are not malicious. They come from employees or contractors taking ordinary actions without realising the exposure they’re creating.”

The scale of the problem is no longer marginal. Fortinet’s Insider Risk Report found that 77% of organisations experienced insider-driven data loss over an 18-month period. In 41% of cases, the most significant incident resulted in financial losses between $1 million and $10 million. Only 16% involved confirmed malicious intent, underscoring how poorly current controls align with real workplace behaviour.

AI amplifies these weaknesses rather than correcting them, because it increases both the volume and velocity of data usage while reducing human oversight.

“Generative AI expands the attack surface significantly,” Bjorn said. “You now have data poisoning, adversarial attacks, and very limited visibility into how AI tools are actually being used across the organisation. Most companies are still reacting after the fact instead of having the controls in place to manage this proactively.”

When data stopped sitting still

For Mena Migally, Regional Vice President, EMEA East at Veeam, the bigger change lies in how AI collapses the distance that once existed between data and decision-making.

“For years, organisations treated data growth almost as a badge of progress,” he said. “They collected everything, stored it everywhere, and gave access far more freely than they realised, because the assumption was that more data would eventually translate into more insight.”

That assumption held as long as data remained something humans reviewed and interpreted at their own pace. AI changes that relationship entirely by making data an active input into automated systems.

“AI systems feed directly on internal data all the time,” Migally said. “Training, retraining, inference, and automation are happening in parallel, which means there is no longer a clean separation between managing data and influencing outcomes.”

Once that separation disappears, data becomes operational infrastructure rather than a passive resource. Decisions are no longer downstream of analysis. They are embedded within it.

“Every dataset becomes an input into a decision-making system,” he said. “Every weakness in classification, access control, or lineage becomes a weakness in how AI behaves, and that behaviour has real consequences.”

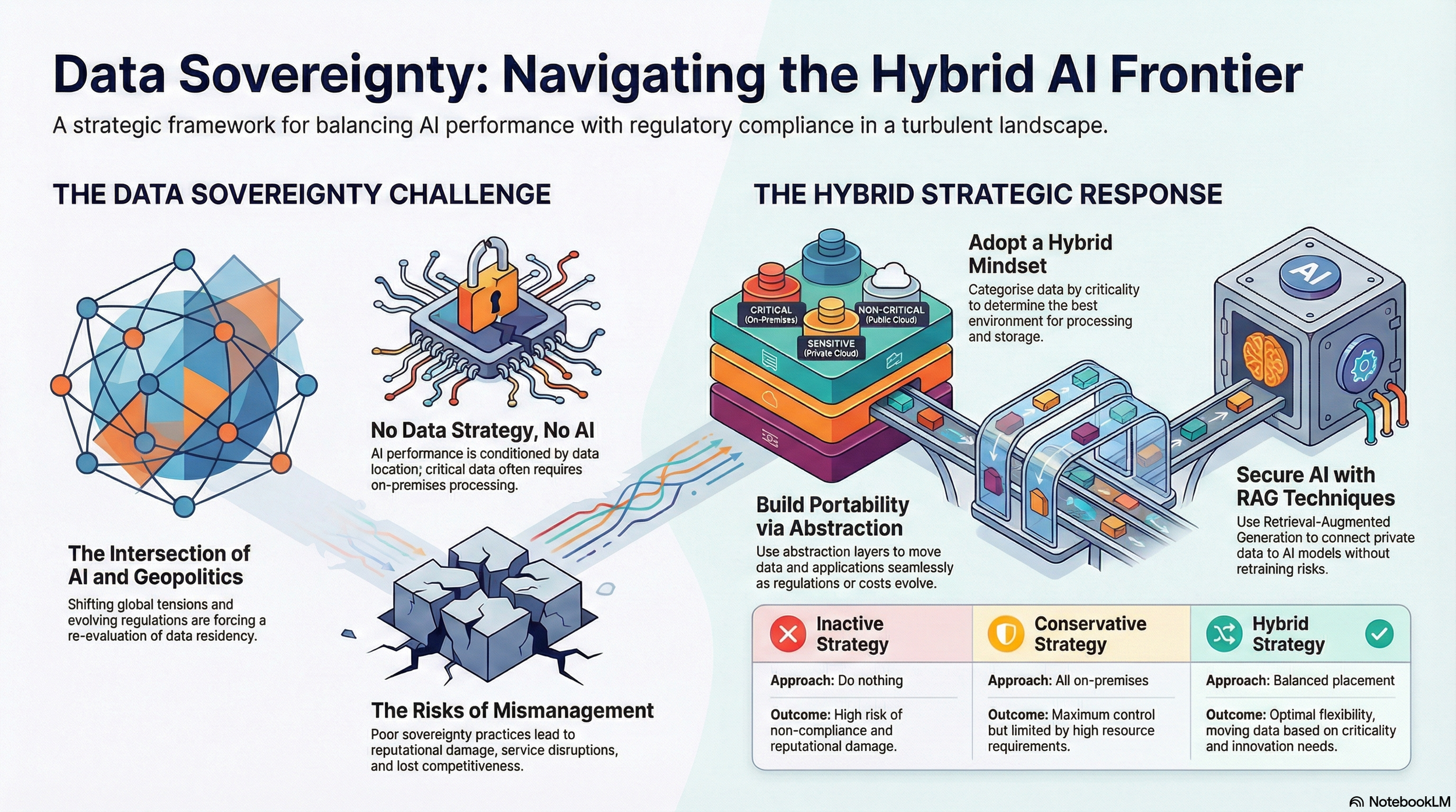

Those consequences extend beyond technical failure. They surface as regulatory exposure, reputational damage, and loss of trust, often in jurisdictions where expectations around data sovereignty and accountability are tightening rapidly.

This is why data teams are being pulled into conversations that previously belonged elsewhere.

“Data teams are no longer just enabling insight,” Migally said. “They are now responsible for whether AI systems are compliant, secure, and accountable, even when they do not formally own those outcomes.”

Risk does not arrive once. It accumulates across the system

One of the reasons organisations struggle to manage AI exposure is that risk does not enter at a single, identifiable moment.

“It doesn’t arrive only at collection, or only at training, or only at deployment,” Migally said. “It exists across the entire lifecycle, which makes isolated controls ineffective.”

Risk accumulates quietly as data moves, is transformed, copied, combined, and reused across systems that were never designed to support that level of traceability.

Migally points to three structural gaps that appear repeatedly. The first is visibility, with organisations lacking a clear understanding of where data resides, who can access it, and how it moves across systems and jurisdictions. The second is trust, as AI systems are deployed faster than organisations can define what failure looks like or who is accountable when it occurs. The third is resilience, as attackers increasingly target data pipelines, models, and automation layers rather than traditional infrastructure.

“Ownership cannot remain fragmented under those conditions,” Migally said. “Data teams are often the only function that can see the lifecycle end to end, from ingestion through transformation to recovery.”

That visibility, however, brings a different kind of pressure, particularly when something breaks and the organisation needs answers quickly.

From support function to operational choke point

As AI systems begin to influence real business outcomes, the role of the data team shifts in ways that are rarely formalised.

“What we are seeing very clearly is that AI has moved beyond experimentation,” said Levent Ergin, Chief Strategist for Climate, Sustainability and AI at Informatica. “Organisations are no longer asking whether AI works in theory. They are asking whether it can operate safely, reliably, and responsibly inside core business processes.”

That question has become a brake on deployment, particularly in regulated industries where explainability and auditability are not optional.

“Our research shows that many organisations are experimenting with agentic AI,” Ergin said. “Far fewer are taking those systems into production, and the limiting factor is not the availability of models or tools. It’s whether leadership is willing to stand behind the data pipelines those systems depend on.”

In practice, this means data teams increasingly decide what proceeds and what stalls, often without explicit authority or visibility at board level.

“When AI influences decisions, data teams are not just supplying inputs anymore,” Ergin said. “They are underpinning operational confidence, because without trusted, governed data, AI simply cannot be operationalised at scale.”

Much of the external debate around AI risk focuses on models and algorithms. Inside organisations, scrutiny is shifting toward the provenance, treatment, and governance of data itself.

“Explainability is often described as a model problem,” Ergin said. “In reality, it’s a data problem. Confidence comes from being able to trace where data came from, how it was transformed, and why a system produced a particular outcome.”

Responsibility without alignment

When something goes wrong, organisations look for evidence rather than intention.

“When regulators ask what happened, answers have to be provable,” Migally said. “They can’t be reconstructed after the fact from partial logs or individual recollections.”

Yet responsibility for insider risk remains scattered. Fortinet’s research shows that 38% of organisations place it in security operations, 26% in data protection, and 12% in dedicated insider risk groups, with the remainder split across IT, enterprise risk, or not assigned at all.

“That fragmentation creates blind spots,” Bjorn said. “Effective insider risk management requires governance that cuts across teams, with senior leadership owning both visibility and funding.”

Without that alignment, data teams often absorb accountability without control, expected to explain failures while lacking the mandate to prevent them upstream.

A shift happening without ceremony

No organisation formally decided that data teams should become central to risk and accountability. There was no announcement, no reorganisation, no explicit transfer of authority.

The change unfolded gradually as AI systems moved closer to customers, regulators, and revenue, and as tolerance for ambiguity narrowed. Decisions that once passed quietly through analytics teams now attract scrutiny. Controls that once felt bureaucratic now determine whether systems can operate at all.

In many organisations, the most consequential AI decisions are not made in innovation labs or executive offsites. They are made inside data pipelines, through choices about access, classification, lineage, retention, and deletion that rarely attract attention unless something breaks.

That is how power often moves inside complex organisations: not through declarations, but through proximity to risk, and through who is left holding the evidence when explanations are demanded.