The privacy wall: how local data laws are reshaping where AI is trained and served

The conversation usually began the same way. A company would sit down with an AI roadmap that assumed data could move where compute was cheapest, models could be trained once and deployed everywhere, and compliance could be handled after the fact. Then a lawyer, a regulator, or a government customer would ask a question that cut through the optimism: where would the data sit, and under whose laws would the AI actually operate?

Across Europe, India, and the Gulf, that question stopped being an edge case and started to determine whether projects moved forward at all. Privacy and data localisation rules that had existed for years, often ignored or quietly worked around, moved directly into architecture discussions, procurement reviews, and board-level risk conversations. AI did not stop advancing, but it stopped moving freely. What took shape was a privacy wall, not designed to block AI adoption, but to keep data and AI services inside regional legal boundaries where authority and enforcement were clear.

This wall did not emerge suddenly. It built up as organisations realised that shortcuts taken during earlier cloud migrations were becoming liabilities once AI systems began producing decisions rather than reports. Systems designed for scale and efficiency now had to answer questions about jurisdiction, auditability, and legal reach, questions that could no longer be deferred.

Mena Migally, Regional Vice President EMEA East at Veeam, explained that what was happening inside enterprises was not hesitation but reorganisation. “What we were seeing was not a slowdown of AI,” he said. “It was a restructuring of AI architecture. Countries were making it clear that data, inference workloads, encryption keys, and routing had to stay inside defined geographic boundaries.” For many organisations, that meant reopening decisions made years earlier, when global reach mattered more than regulatory clarity.
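As a rough illustration of what such a boundary requirement could look like when written down as a check, the Python sketch below flags any part of a deployment that sits outside a permitted set of regions. The `Deployment` fields, the `residency_violations` helper, and the region names are hypothetical, chosen only to mirror the four elements Migally listed; they are not drawn from any vendor's product or any regulator's rulebook.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    """Hypothetical description of where each part of an AI service runs."""
    data_region: str
    inference_region: str
    key_region: str
    routing_regions: tuple


def residency_violations(deployment, allowed_regions):
    """Return the names of components located outside the allowed regions."""
    allowed = set(allowed_regions)
    violations = []
    if deployment.data_region not in allowed:
        violations.append("data")
    if deployment.inference_region not in allowed:
        violations.append("inference")
    if deployment.key_region not in allowed:
        violations.append("encryption keys")
    # Every network hop must stay inside the boundary, not just the endpoints.
    if any(region not in allowed for region in deployment.routing_regions):
        violations.append("routing")
    return violations


# Illustrative only: keys held in a different region from everything else.
example = Deployment(
    data_region="region-a",
    inference_region="region-a",
    key_region="region-x",
    routing_regions=("region-a", "region-b"),
)
print(residency_violations(example, {"region-a", "region-b"}))
```

A real review would involve legal interpretation that no such check captures; the sketch only shows why keys and routing have to be tracked alongside the data itself.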

Farid Najjar of NetApp described encountering the same pressure from inside enterprise deployments. “What changed was not the technology,” he said. “What changed was the tolerance for legal ambiguity. Once AI systems touched regulated data, architecture decisions stopped being optional.” Projects that once assumed centralised training and global inference began to fracture into regional pipelines, each constrained by local law.

When AI changed the nature of data

The pressure intensified as AI moved from experimentation into production. Data stopped being something organisations stored for records, analytics, or compliance and became something that actively shaped outcomes in real time. Models moved from advisory tools to operational systems embedded inside workflows that affected customers, citizens, and markets.

Fred Lherault, CTO EMEA/Emerging at Pure Storage, described this shift as a fundamental change in how data behaved. “Data was no longer just stored and reported on,” Lherault said. “It was continuously fed into models that generated predictions, decisions, and automated actions.” Once data entered that loop, the distance between data governance and consequence collapsed, because errors or outages were no longer abstract risks but immediate operational failures.

That reality became tangible inside large enterprises already running at scale. In one multinational bank operating across Europe and the Gulf, internal teams found that an AI-driven fraud detection system trained on pooled regional data could no longer be legally updated using the same datasets once customer data crossed into new regulatory classifications. Engineers were forced to split the training pipeline by jurisdiction, duplicating infrastructure and slowing iteration, not because the model underperformed, but because legal exposure outweighed marginal performance gains. What had started as a technical optimisation problem became a governance constraint embedded directly into the model lifecycle.
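The bank's actual pipeline is not described in detail, so the following is only a minimal Python sketch of the general idea of splitting training by jurisdiction. The `Record` type, the jurisdiction labels, and the `train_fn` callback are assumptions introduced for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    """Hypothetical training record tagged with its governing jurisdiction."""
    customer_id: str
    jurisdiction: str
    features: tuple


def split_by_jurisdiction(records):
    """Group records so each jurisdiction gets its own training set."""
    partitions = defaultdict(list)
    for record in records:
        partitions[record.jurisdiction].append(record)
    return dict(partitions)


def train_regional_models(records, train_fn):
    """Train one model per jurisdiction; no model sees another region's data."""
    return {
        jurisdiction: train_fn(subset)
        for jurisdiction, subset in split_by_jurisdiction(records).items()
    }


# Illustrative usage with a stand-in "trainer" that just counts its inputs.
sample = [
    Record("c1", "EU", (0.1, 0.9)),
    Record("c2", "GCC", (0.4, 0.2)),
    Record("c3", "EU", (0.7, 0.3)),
]
models = train_regional_models(sample, train_fn=len)
```

The cost the engineers faced is visible even here: each jurisdiction trains on a smaller pool and needs its own run, which is the duplicated infrastructure and slower iteration described above.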

Najjar connected that shift to why earlier compromises no longer held. “AI forced companies to confront something they had postponed,” he said. “They realised that if data could not move legally, then AI could not move architecturally. That was a hard reset for a lot of strategies.” In many cases, this surfaced during training, when models required access to historical datasets spread across jurisdictions that no longer allowed free movement.

Lherault added that accountability now followed data by default. “Its location could no longer be separated from responsibility,” he said, explaining that once AI systems produced decisions rather than insights, governance attached itself to infrastructure choices rather than policy statements.

What the numbers showed

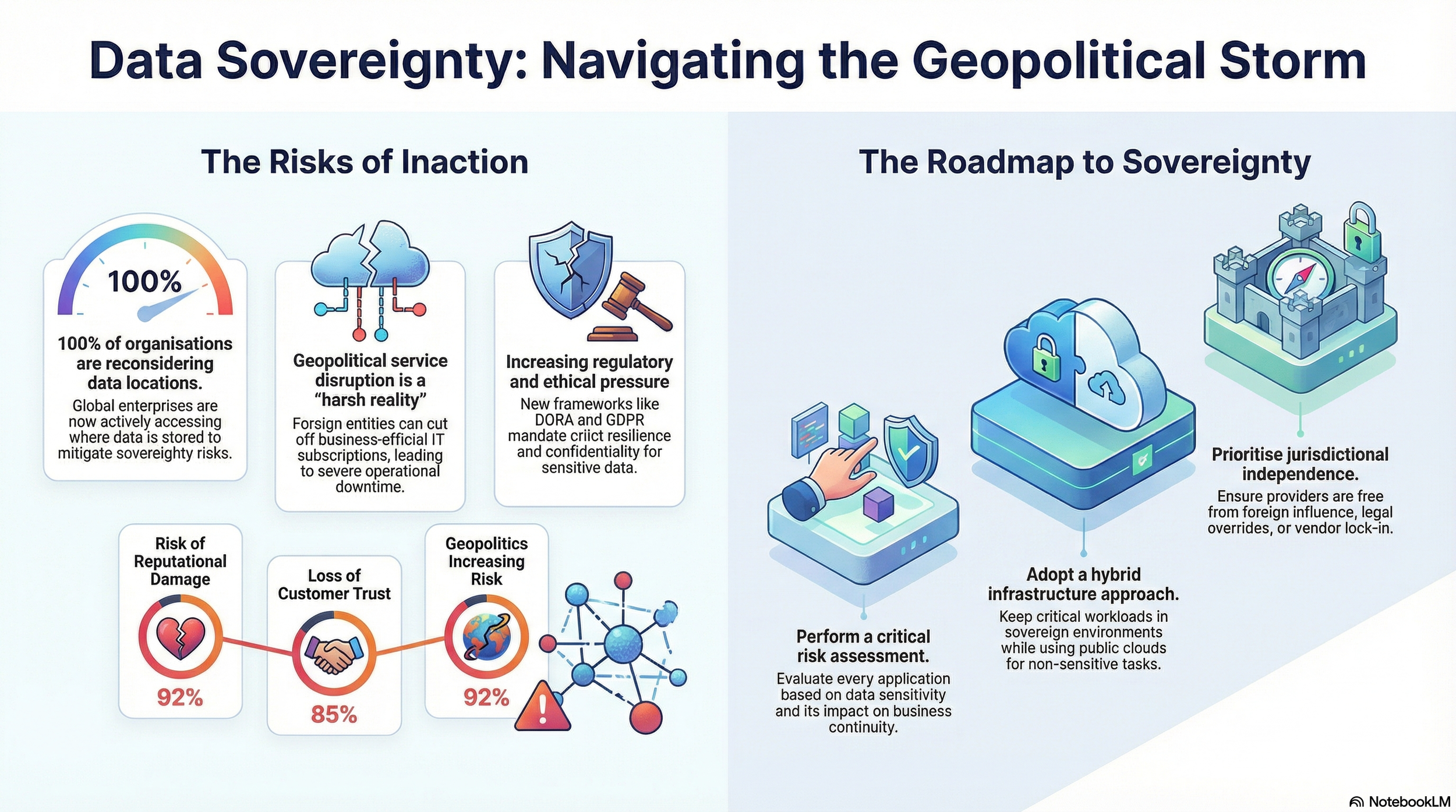

The scale of the shift became clearer in the data. In Pure Storage’s survey of enterprises across nine countries in Europe and Asia-Pacific, 100% of respondents said data sovereignty risks had already forced them to reconsider where their data was stored or processed, while 92% linked weak sovereignty planning to reputational damage and 85% connected it directly to loss of customer trust. These figures reflected operational decisions already taken, not preparations for a distant regulatory future.

The same research showed how deeply geopolitical uncertainty had entered technology planning. Close to 92% of respondents believed geopolitics had increased the risks of ignoring data sovereignty, and nearly one-third identified foreign government access and surveillance as material threats, rather than abstract concerns. These concerns appeared consistently across sectors, from financial services and healthcare to energy and public infrastructure.

Lherault said the numbers matched what leadership teams were experiencing internally. “Boards were no longer asking whether sovereignty mattered,” he said. “They were asking how quickly systems could be redesigned to avoid disruption.” In that environment, localisation became less about regulatory compliance and more about operational resilience.

Alessandro Liotta of Fortinet connected those findings to government behaviour. “Once AI entered critical services, tolerance for jurisdictional uncertainty collapsed,” he said. “Trust, enforcement, and resilience became inseparable.” From a security perspective, fragmented oversight created exposure that governments were no longer willing to accept.

Regulation moved into architecture

Regulators responded unevenly, but decisively. GDPR had already complicated cross-border data transfers in Europe, but newer rules pushed deeper into system design. The EU AI Act tied accountability, auditability, and risk classification directly to how models were built and operated. India’s Digital Personal Data Protection Act reinforced domestic responsibility for how personal data was collected and used. Across the Gulf, particularly in Saudi Arabia and the UAE, residency requirements increasingly governed how AI systems interacted with sensitive data in public services, finance, defence, and critical infrastructure.

In India, this shift played out alongside a broader push to build domestic digital infrastructure. Policymakers balanced ambitions around AI adoption with political sensitivity around citizen data, leading enterprises to assume from the outset that consumer and government data would need to remain in-country. That assumption filtered directly into how AI systems were designed, from where data lakes were built to how vendors structured managed services. In the Gulf, a similar logic applied, but with greater emphasis on national resilience and strategic autonomy, particularly in sectors tied to energy, finance, and state services.

Migally said these developments reshaped commercial reality for global providers. “There was no longer a one-size-fits-all AI deployment model,” he said. “Global vendors had to localise their services, build regional AI stacks, or partner with local cloud and infrastructure providers who already met regional requirements.”

Law, in effect, began to dictate architecture rather than reacting to it.

Cloud providers had adapted once before by creating regional availability zones and sovereign offerings, but AI forced the same reckoning at a deeper level. Training data, model weights, and inference pipelines proved harder to separate cleanly across jurisdictions than storage alone had ever been.

Liotta reinforced the regulatory logic behind that shift. “Governments were trying to ensure enforceability,” he said. “If data and AI systems operated outside legal reach, regulation meant nothing.”

Oversight only worked if systems were designed to remain within it.

Why local clouds kept winning deals

As regulatory pressure translated into architectural constraints, cloud markets adjusted. Local and regional providers gained relevance not because they outperformed hyperscalers technically, but because they operated inside the same legal systems as their customers, reducing uncertainty during audits, investigations, and incident response.

Najjar said this alignment reshaped procurement decisions. “Local meant keeping the data, and often the compute that operated on it, within that legal territory,” he said. “It was about matching AI pipelines to legal geography, not just physical geography.” For regulated organisations, that alignment simplified accountability when systems failed or were challenged.

Pure Storage’s data reflected that behavioural change. Close to 78% of organisations reported adopting strategies that combined multiple service providers, sovereign data centres, and governance requirements embedded directly into commercial agreements, signalling a move away from reliance on a single global cloud.

Lherault said this showed intent rather than retreat. “Organisations were not abandoning AI,” he said. “They were rebuilding it in environments they could govern.” The goal was not isolation but proper regulation.

Sovereignty as enforcement

For policymakers, the privacy wall reflected a problem of enforceability rather than isolation. Liotta said governments were responding to over-reliance on foreign cloud providers, loss of control over data subject to outside jurisdictions, and difficulty enforcing domestic law against providers based elsewhere. “AI amplified all three,” he said, “because it concentrated decision-making power into systems that operated faster than legal remedies.”

Migally said the redistribution of power was unavoidable. “Sovereignty reshaped where power sat, who controlled data, and how accountability was enforced,” he said, pointing to ongoing collaboration between regulators and technology providers in the Gulf aimed at containing risk without freezing innovation in critical services.

Najjar said enterprises had largely accepted that adjustment. “The era of borderless AI ended quietly,” he said. “What replaced it was not restriction, but responsibility.” What followed was a more bounded model of AI deployment, shaped less by efficiency and more by trust, jurisdiction, and enforceability.