Seven Models, No Control Plane: The Inference Problem Enterprises Created for Themselves

For most of the past three years, enterprise AI strategy has been organised around a single question: which model? The evaluations, the benchmarks, the proof-of-concept cycles, all of it built on the assumption that choosing the right foundation model was the decision that would determine whether an AI programme delivered value or not.

The 2026 F5 State of Application Strategy Report, produced from surveys of more than 1,100 IT decision makers globally, makes a quietly devastating case that this framing has been wrong from the start. The model is not the point. Inference is. And most enterprises are running inference at scale without the architecture to govern it.

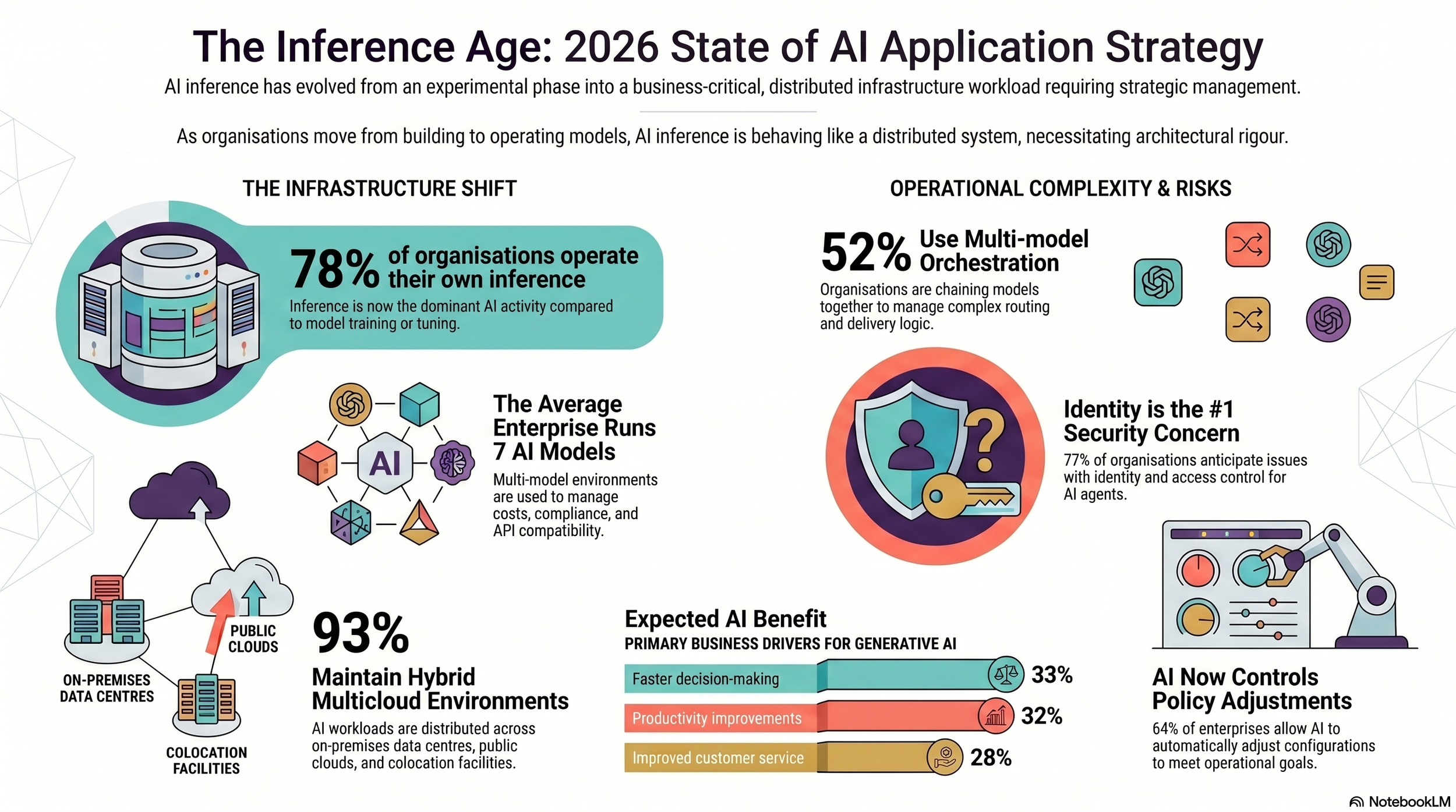

The numbers that establish this are not ambiguous. 78% of organisations are operating their own inference services. Close to 77% report inference as their dominant AI activity, rather than training or fine-tuning. Organisations are simultaneously running or actively evaluating an average of seven AI models in production.

Taken together, these figures describe an industry that has crossed a threshold it has yet to fully acknowledge. AI inference has moved from experimentation to operational infrastructure, embedded in production systems, daily decision loops, and the delivery pipelines for applications and services that businesses depend on. The transition happened faster than the governance frameworks could manage.

Seven models is where the problem begins

The seven-model average is the figure that unlocks everything else in the report. The instinct is to read it as a sign of organisational disorder, competing teams, lack of strategic coherence. The data says otherwise. When respondents explained why their organisations use multiple model families, 90% cited technical reasons including API compatibility, availability, and failover. 79% cited business and strategic considerations including cost optimisation, compliance requirements, and data sovereignty. User preference, the explanation consistent with chaos, barely registered at 5%.

Multi-model AI has arrived at the same place as hybrid multicloud for the same reasons: the business demands it. Different models carry different cost structures, operate under different regulatory constraints, expose different interfaces, and degrade under load in different ways. No single model satisfies every requirement across a modern enterprise operating across multiple markets and jurisdictions. These are architectural decisions made under real commercial and legal pressure, and they have a structural consequence that most organisations have not yet fully absorbed.

Once an enterprise runs several models in parallel, inference stops behaving like a single managed endpoint. It starts behaving like a distributed system. The same routing logic, policy enforcement, cost governance, fallback conditions, and observability requirements that distributed systems have always demanded now apply to the AI layer. New operational responsibilities emerge that did not exist before: model-aware authentication, semantic abuse detection, token-based cost management. None of these map cleanly onto the existing remits of platform, security, or application teams. Enterprises respond by standing up new teams with their own preferred tooling. Without deliberate convergence, the complexity compounds, and the control surface fragments further.

The governance gap that is already visible in the data

The report makes that gap measurable. Only 28% of organisations have streamlined developer workflows through a single AI management point. The remaining 72% are managing inference across fragmented control surfaces. In operational terms, they are running seven production systems without unified traffic management, consistent security policy, or shared observability. They have not chosen to do this. They have arrived here incrementally, one model deployment at a time, and the cumulative effect is an inference estate that has outgrown the governance architecture around it.

The historical parallel the report draws is to the microservices transition. When monolithic applications gave way to distributed services and APIs, the same fragmentation emerged. What eventually resolved it was the deliberate construction of a management layer: load balancers, API gateways, service meshes, centralised policy engines. Organisations that built those layers early gained compounding operational advantages in reliability, cost control, and security posture. Those that allowed fragmentation to persist paid for it across all three dimensions.

AI inference is following the same path, and the critical difference is speed. The microservices transition unfolded over years. The operationalisation of AI inference, as this data documents, has happened in months. Organisations have moved into fleet management mode before the control infrastructure for fleet management exists.

The prompt layer is where value and risk concentrate

The report's findings on operational priorities reveal something important about where inference sits in the enterprise architecture. When respondents ranked where application delivery and security services have the greatest impact in AI systems, they pointed to the capabilities wrapped directly around inference itself: input filtering, prompt handling, injection prevention, and memory management. Output moderation matters, but the primary concern is what enters the model, not what leaves it.

This is not arbitrary. It follows from what enterprises actually need inference to deliver. The top two expected benefits from generative AI are faster decision-making, cited by 33% of respondents, and productivity improvements through adaptive learning, cited by 32%. These are not benefits that depend on model capability. They depend on AI being reliably embedded in operational workflows with accurate, well-governed, manipulation-resistant inputs. The organisations that realise these benefits soonest are not those with the most sophisticated models. They are those that have built the systems to ensure what reaches the model is clean and appropriately scoped. That is an inference governance problem. It is also, by another name, an application delivery and security problem.

What happens to organisations that miss this

The report's conclusion is pointed. If AI fails, stalls, or becomes prohibitively expensive at scale, it will not be because the models were insufficient. It will be because the organisations running them underestimated the operational gravity, complexity, and security risk that inference introduces when it becomes a distributed, multi-model, production workload. The primary hurdle is no longer model capability. It is architectural scalability and governance maturity.

The organisations that get ahead of this are those that have already reframed inference as an application delivery and security problem rather than a model selection exercise. The management layer, the systems governing how inference traffic is routed, constrained, observed, and protected, is where operational leverage concentrates. Building that layer deliberately, with converged controls and cross-model observability, is what separates enterprises that will scale AI effectively from those that will spend the next two years firefighting the complexity they chose not to manage when the window was open.

F5 has commercial interest in that argument. The data it rests on is consistent enough, across industries, question types, and organisation sizes, to stand independently. Inference has joined the application stack. The question is not whether enterprises have operationalised it. Most already have. The question is whether they have built the architecture to match what they are running. For 72% of them, the answer is not yet.