CEOs are staking their careers on AI they no longer fully trust

Three in four chief executives believe they will lose their job this year specifically because of AI. They are not talking about someone else.

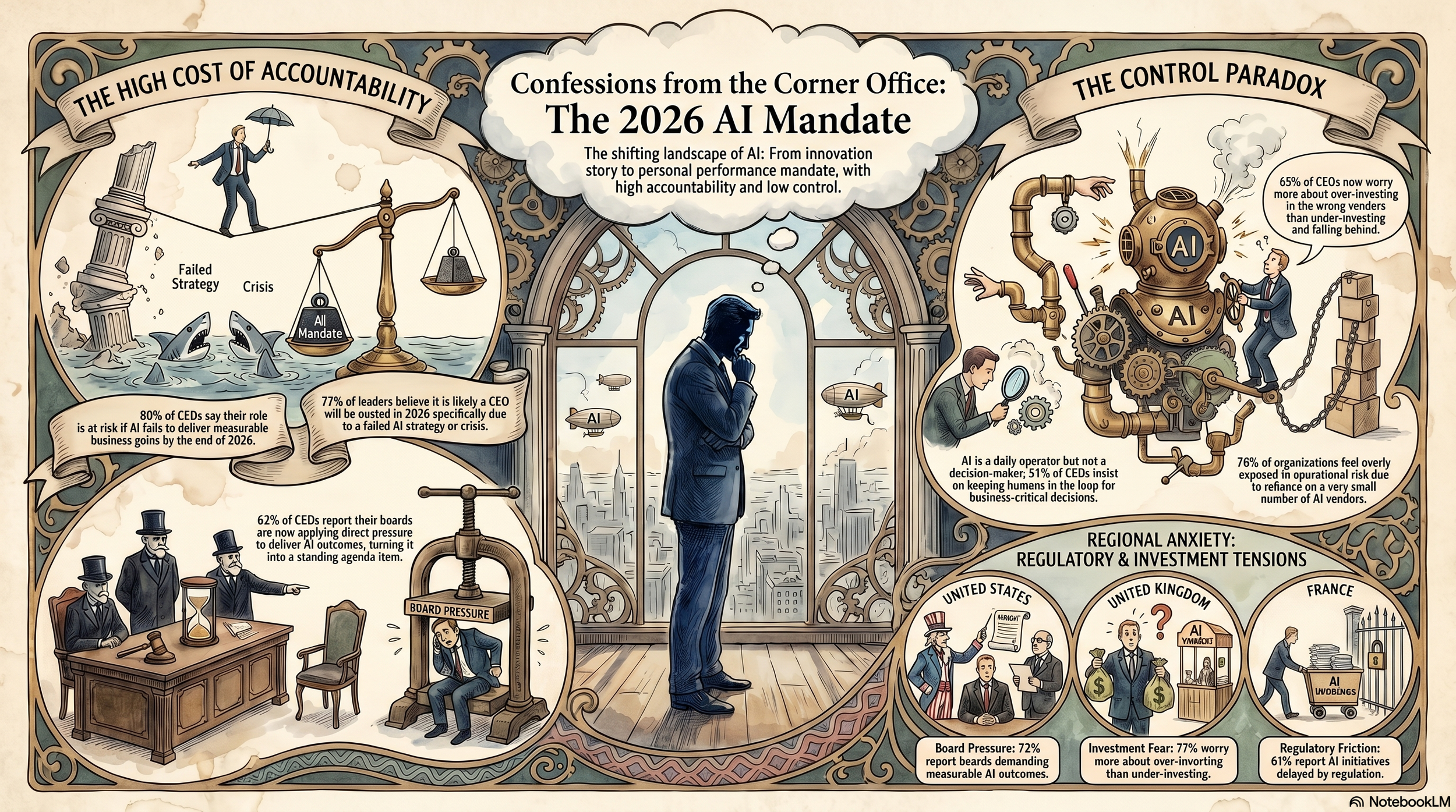

That is the sharpest finding to emerge from Dataiku and The Harris Poll's Global AI Confessions report, a survey of 900 chief executives across eight markets conducted between February and March 2026. Eight in ten say their role is at risk if their organisation fails to deliver measurable business gains from AI before the year is out. The deadline is not a planning horizon. Accountability is not a governance principle. It is, as the survey's own respondents put it, personal: "My job depends on AI, but I'm less certain than ever I'll get it right."

That confession matters most because of when it surfaces. Last year, the dominant anxiety among enterprise leaders was falling behind. This year, something has shifted. The fear is no longer about speed. It is about being held responsible for systems that are already running, already shaping decisions, and increasingly difficult to explain or control. The mandate arrived before the infrastructure was in place to deliver it.

The board has stopped waiting

For the past two years, AI has sat at the edge of the boardroom agenda, discussed as a strategic priority without the performance pressure that typically follows. That has changed. Sixty-two per cent of surveyed CEOs now report active board pressure to deliver measurable AI-driven outcomes, making AI a standing accountability item rather than a strategic discussion. Eighty per cent say their AI strategy is very important to investors. The question boards are asking is no longer whether the organisation is investing in AI. It is whether that investment is working.

Into that environment, the confidence data lands with particular force. The proportion of CEOs describing themselves as extremely confident in their ability to deploy AI agents in production and at scale fell from 41% in 2025 to 31% in 2026. Scrutiny is intensifying at exactly the moment conviction is quietly receding. More than half of respondents, 56%, now concede that competitors have deployed AI strategies they consider superior to their own. The public posture holds. The private assessment is more complicated.

The investment picture reflects the same uncertainty. Nearly two-thirds of CEOs (65%) say they now worry more about over-investing in the wrong vendors than about under-investing and falling behind competitors. Twelve months ago, the fear pointed the other way. The shift is not a retreat from AI but a reckoning with a market that has produced neither a clear leader nor reliable signals about where value is genuinely being created. Seventy-six per cent say their organisation is overexposed to strategic and operational risk due to reliance on too few vendors. The deeper the commitment, the harder it has become to feel certain the bets are right.

The gap between owning strategy and controlling it

Spend time with the survey's data on decision-making, and a specific structural problem comes into focus. Seventy per cent of CEOs identify themselves as the primary driver of AI strategy within their organisation. That figure sits well ahead of CIOs at 16%, Chief Data Officers at 6%, and IT departments at 5%. On paper, the chain of command runs directly to the top.

The operational reality is considerably more fragmented. Only 60% of these same CEOs say they participate in more than half of AI-related decisions. Just 6% are involved in nearly all of them. The choices that actually determine AI outcomes (vendor selection, deployment architecture, model configuration, governance design) run through CIOs, data leaders, technical teams, and external partners. Two-thirds of respondents said they had questioned or challenged AI vendor or platform decisions made by their CIO or other team members in the past year, with nearly a quarter doing so more than once. That is not oversight. It is a correction after the fact.

The report captures the gap in language that functions as its own diagnosis: "I say I own AI strategy — but I'm not the one making the decisions that will determine its success." For CEOs, accountability travels upward even when decision-making power does not. As AI moves from pilot to production, that structure is becoming harder to sustain.

The problem compounds as AI creation spreads beyond centralised teams. Ninety-four per cent of surveyed leaders say low- or no-code tools are critical to scaling AI across the workforce. More builders mean more decisions, more variability, and a wider surface area of risk that no single executive can see. Ninety-six per cent of CEOs believe employees are already using generative AI tools without organisational approval. Forty-two per cent estimate that more than half of their workforce is doing so. Shadow AI is not a future concern. It is the current operating condition.

The trust problem has an operational cost

The third confession the survey surfaces is the one that connects the others: "I rely on AI for decisions, but I still don't trust it to act alone."

AI is now influencing an average of 40 business decisions per year at the CEO level, across performance analysis, competitive intelligence, and strategic direction. Nearly all respondents (94%) say they would be comfortable telling their board that AI tools had shaped a strategic recommendation. The integration is real, and openly acknowledged. What has not followed is autonomy. More than half of CEOs (51%) maintain a human approval step for business-critical decisions. Eighty per cent say they at least occasionally request justification for AI-driven recommendations. Only 4% never do.

Every output reviewed and every recommendation held at a human checkpoint is a constraint on the speed and scale at which AI can generate the returns that boards are now demanding. The governance infrastructure required to trust AI to act is still being built. Until it is, the gap between what AI can do and what leaders are willing to let it do carries a growing operational cost. Revenue growth is now cited as the primary measure of AI success by 28% of respondents, up from 16% in 2025, nearly level with productivity improvement at 25%. The benchmark has moved from internal efficiency to market performance. The systems are not yet running at the level that benchmark requires.

The pressure is not evenly distributed

Regional data in the survey reveals different intensities of the same underlying tension. US CEOs face the most concentrated board scrutiny: 72% report active pressure to deliver AI outcomes, up from 61% the previous year, and 33% identify AI as their top business priority, the highest proportion globally. In France, 61% of chief executives say AI initiatives have been delayed by regulatory uncertainty, the highest share of any market and a direct reflection of the EU AI Act's compliance demands reshaping deployment timelines before outcomes can even be measured.

The UAE data carries its own signal. Twenty-three per cent of UAE chief executives say AI is actively jeopardising their long-term legacy, more than double the global average of 10%. Confidence in explaining AI-driven decisions to regulators or courts runs 10 percentage points below the global figure, the lowest of any region surveyed. In a market that has invested heavily in positioning itself as an AI-forward economy, the gap between ambition and the governance needed to protect outcomes is the defining constraint.

Japan presents the clearest expression of scepticism about execution. Only 60% of Japanese CEOs say their AI roadmap accurately reflects operational reality, against 81% globally. Fourteen per cent say they are not confident it does at all, the highest level of expressed doubt across any market in the survey.

Taken together, the data describe not a crisis of investment or intent but a crisis of control, arriving precisely when control is being demanded. The companies that emerge from this phase in the strongest position will not be those that adopted AI fastest. They will be those whose governance can withstand the scrutiny already underway.