Sovereign AI and EBITDA: How the Gulf’s AI Investment Faces Its Productivity Test

Since 2017, AI in the Middle East has been framed as destiny. National strategies were unveiled with ambitious precision, sovereign wealth funds signalled patient capital, Abu Dhabi opened a purpose-built AI university, and hyperscale data centre capacity rose from desert perimeters with geometric confidence. The region cast itself as an early mover in sovereign compute, a jurisdiction determined not merely to consume global technology cycles but to anchor them.

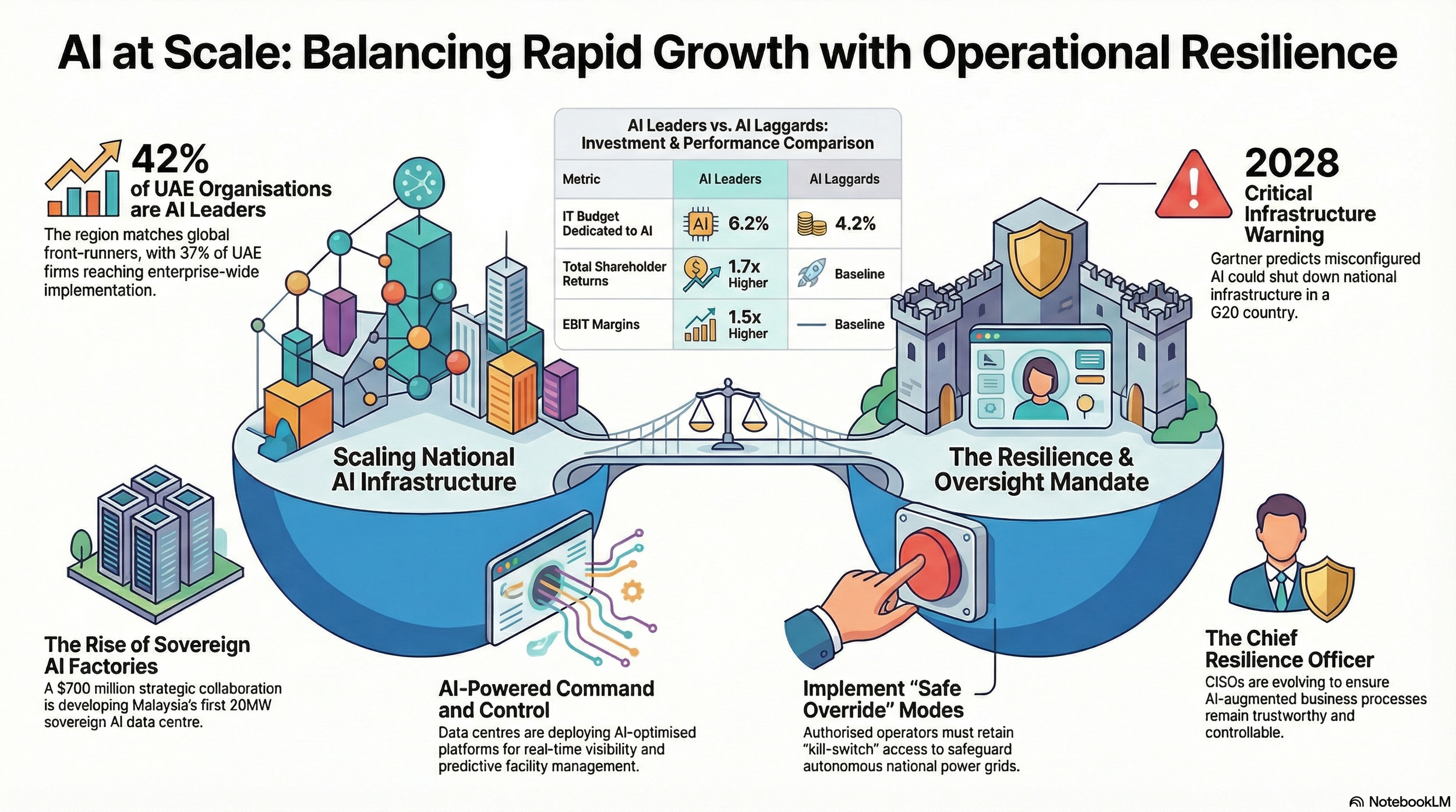

In the first two months of 2026, the narrative has continued, but its substance has grown stronger. In the first two weeks of February 2026 alone, Magna AI and Zchwantech announced a $700 million, 20MW sovereign AI data centre in Sarawak, Malaysia. Khazna Data Centres confirmed a long-term, commercially significant agreement with Presight to embed AI directly into the operational command systems that manage its hyperscale facilities.

Boston Consulting Group (BCG) reported that 42% of UAE organisations now qualify as AI Leaders, delivering up to 1.7 times higher total shareholder returns and 1.5 times higher EBITDA margins than AI Laggards.

Gartner predicted that misconfigured AI could shut down national critical infrastructure in a G20 country by 2028. Mohamed bin Zayed University of Artificial Intelligence (MBZUAI) launched The Academy alongside the graduation of senior policymakers and corporate leaders trained in AI governance.

The infrastructure phase has largely been executed; the steel, silicon and fibre are in place. The next phase asks a harsher question: does that infrastructure generate durable economic output?

The $700 Million Variable: Capacity Is Not Value

A 20-megawatt AI data centre is not a marketing exercise. It is a very expensive, long-term financial commitment.

When a country or company builds something at that scale, the costs do not stop once construction is complete. The facility consumes vast amounts of electricity every single day. The cooling systems must run constantly to prevent overheating, especially in warm climates. The specialised chips that power AI systems become outdated quickly as new versions are released, which means expensive upgrades are needed every few years. Loans must be repaid, equipment must be maintained and the staff must be paid.

All of those costs continue whether the data centre is being used heavily or sitting half empty.

The new facility in Sarawak, backed by Magna AI and Zchwantech, has been described as a “sovereign AI factory” designed to process data, train AI models and support national and enterprise needs.

Dr Moataz BinAli, CEO of Magna AI, explained the ambition clearly: “Malaysia is at an inflexion point, with the opportunity to lead the region in sovereign AI capabilities. Through this collaboration, we are building a secure and scalable AI infrastructure that supports national resilience and long-term economic growth.”

The strategic idea is easy to understand. If a country builds its own AI infrastructure, it does not have to rely as heavily on foreign technology providers. It keeps control over its data and computing power. But the financial reality is more demanding.

Owning the infrastructure only makes economic sense if it is used extensively and consistently. Servers that are switched on but underused still consume electricity. Equipment that sits idle still loses value over time. If businesses, government agencies and industries do not actively use the computing power for real projects — from healthcare systems and financial services to logistics and energy management — then the facility becomes an expensive asset that generates little return.
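The arithmetic behind this point can be sketched in a few lines. The Python below is a minimal illustration under loudly stated assumptions: the annual cost figure and the number of accelerator units are hypothetical round numbers, not figures from the Sarawak project, and all costs are treated as fixed, a simplification since power draw does vary somewhat with load. It shows how the cost of each hour of compute actually used rises as utilisation falls.

```python
# Hypothetical sketch: fixed annual costs spread over the compute hours actually used.
# All figures are illustrative assumptions, not data from any real facility.

HOURS_PER_YEAR = 8760


def cost_per_used_hour(annual_fixed_cost: float, capacity_units: int, utilisation: float) -> float:
    """Return the annual fixed cost divided by the unit-hours actually consumed."""
    if not 0 < utilisation <= 1:
        raise ValueError("utilisation must be in the range (0, 1]")
    used_unit_hours = capacity_units * HOURS_PER_YEAR * utilisation
    return annual_fixed_cost / used_unit_hours


if __name__ == "__main__":
    annual_fixed_cost = 100_000_000  # hypothetical: power, cooling, debt service, staff
    accelerators = 10_000            # hypothetical number of accelerator units
    for utilisation in (0.3, 0.6, 0.9):
        cost = cost_per_used_hour(annual_fixed_cost, accelerators, utilisation)
        print(f"utilisation {utilisation:.0%}: {cost:.2f} per used unit-hour")
```

Because the cost base does not shrink when demand does, a facility running at 30% utilisation carries three times the cost per used hour of one running at 90%.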

That is why the conversation in the Gulf has shifted. The focus is no longer just on how quickly new AI facilities can be built. It is on how effectively they can be filled with meaningful work. In simple terms, success is no longer measured by how big the infrastructure is, but by how much real value it produces.

AI Running the Infrastructure That Runs AI

In the UAE, Khazna’s agreement with Presight illustrates what integration looks like inside the system itself. Khazna signed what it described as a long-term, commercially significant contract to deploy an AI-optimised facility management solution and a centralised command and control centre across its data centre portfolio.

Hassan Alnaqbi, CEO of Khazna, explained the operational shift in pragmatic terms: “This collaboration strengthens our ability to operate complex, mission-critical infrastructure with greater visibility, resilience and efficiency. The deployment of Presight’s AI-driven platform and centralised command and control centre supports our commitment to operational excellence and sustainable growth as we continue to expand our global data centre network.”

Thomas Pramotedham, CEO of Presight, positioned the deployment as a structural evolution: “By embedding intelligence into data centre operations, we are enabling greater resilience, efficiency and sustainability at scale. Our work with Khazna demonstrates Presight’s ability to translate advanced AI capabilities into scalable platforms that deliver lasting operational value as digital infrastructure continues to grow in size, complexity and strategic importance.”

The system integrates operational technology and facilities management data into a unified intelligence layer capable of predictive maintenance, proactive fault detection and digital twin modelling. Data centres supporting AI workloads are among the most energy-intensive digital assets in any economy.

In climates where ambient temperatures remain high for much of the year, cooling infrastructure accounts for a significant share of operating expenditure. Power usage effectiveness (PUE) is the ratio of total facility energy to the energy delivered to IT equipment, so even fractional improvements in it add up across thousands of servers operating continuously.
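The effect of a small PUE gain can be estimated directly. The sketch below assumes a constant IT load and a flat electricity price, both hypothetical inputs chosen for illustration rather than figures from Khazna or any other operator; it computes the facility energy implied by a given PUE and the annual saving from lowering it.

```python
# Hypothetical sketch: annual energy and cost saved by a small PUE improvement.
# Assumes a constant IT load and a flat electricity price (both illustrative).

HOURS_PER_YEAR = 8760


def facility_energy_mwh(it_load_mw: float, pue: float) -> float:
    """Total facility energy over a year: IT energy multiplied by PUE."""
    return it_load_mw * HOURS_PER_YEAR * pue


def annual_pue_saving(it_load_mw: float, pue_before: float, pue_after: float,
                      price_per_mwh: float) -> tuple[float, float]:
    """Return (energy saved in MWh, cost saved) from lowering PUE."""
    saved_mwh = (facility_energy_mwh(it_load_mw, pue_before)
                 - facility_energy_mwh(it_load_mw, pue_after))
    return saved_mwh, saved_mwh * price_per_mwh


if __name__ == "__main__":
    # Hypothetical inputs: 20 MW of IT load, PUE cut from 1.50 to 1.45, price of 100 per MWh.
    mwh, cost = annual_pue_saving(20, 1.50, 1.45, 100)
    print(f"saved {mwh:,.0f} MWh, worth {cost:,.0f} per year")
```

With those assumed inputs, a 0.05 drop in PUE saves roughly 8,760 MWh a year, and the saving scales linearly with IT load, which is why small ratio changes matter at hyperscale.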

If predictive systems reduce unplanned outages, repair costs decline and service-level agreements stabilise. If digital twin simulations allow operators to test configuration changes before live deployment, systemic risk is reduced. The financial outcome rarely appears as a headline; it materialises quarter by quarter in operating margins and avoided downtime.

In this environment, AI does not sit on top of infrastructure as a feature. It governs infrastructure as a control layer.

Training Authority, Not Just Engineers

When AI is built deeply into systems, installing the machines quickly is not enough. The real challenge is helping the people and organisations that run those systems understand them well enough to use them properly, because changing how institutions think and operate always takes far longer than setting up the hardware.

At MBZUAI, ‘The Academy’ was launched alongside the graduation of the sixth cohort of its Executive Program, which has trained more than 200 senior leaders across government and industry.

Spring Chunxiao Fu, MD of the MBZUAI Academy, described the mandate with expansive ambition: “The launch of The Academy reflects the mission of Mohamed bin Zayed University of Artificial Intelligence to move AI beyond the laboratory and into the heart of society. By bringing together the world’s greatest minds - from the artists in our new fellowship to the leaders in our executive programmes - we hope to shape the narratives and elevate the global conversation beyond technology to purpose and impact, while contributing to Abu Dhabi’s ambitious role in the global AI landscape.”

The importance lies not in rhetoric but in authority. Executive Program participants include undersecretaries, chief executives and senior policymakers who control procurement frameworks, budget allocation and regulatory architecture.

AI deployments frequently stall not because models fail but because institutions resist change. Procurement frameworks favour legacy vendors. Data governance remains fragmented. Risk assessment processes slow integration.

Training decision-makers does not eliminate institutional friction, but it shifts the baseline. The AI x Arts Fellowship, linking artists such as Refik Anadol with AI researchers and museums in Abu Dhabi, extends this integration into cultural institutions, influencing public perception and, by extension, policy stability. Economic absorption depends on both technical capability and institutional alignment.

Financial Performance and Capital Discipline

Sustaining early AI-driven performance gains requires a level of operational discipline that goes far beyond pilot projects and proof-of-concept deployments. In the experimentation phase, organisations can tolerate inefficiency, duplication and unclear ownership because the stakes remain relatively contained. Once AI begins to influence core revenue streams, cost structures and infrastructure systems, that tolerance disappears.

As adoption spreads, differentiation no longer comes from having an AI strategy slide or a handful of use cases. It comes from redesigning workflows, restructuring accountability and building robust data governance frameworks. AI systems must be monitored continuously, retrained deliberately and integrated into processes that were never originally designed for automation. Performance gains that emerge during strong economic cycles must prove resilient during periods of volatility, inflationary pressure or tightening capital conditions.

When AI begins to command a structural share of capital expenditure and operating budgets, its performance is no longer evaluated by innovation teams alone. Boards examine its contribution to margin stability. Investment committees analyse return on invested capital.

Chief financial officers compare recurring AI expenditure against measurable output. If EBITDA margins expand, the question becomes whether those gains are durable and repeatable. If they do not, recurring spending must be justified.
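One way to make “durable and repeatable” testable is to compare margins against a pre-AI baseline across several consecutive periods rather than a single quarter. The sketch below is a hypothetical rule of thumb, not a standard accounting test; the baseline margin, the four-period window and the sample figures are all assumptions.

```python
# Hypothetical sketch: is an EBITDA margin uplift sustained, or a one-off?
# The baseline margin, window length and sample figures are assumptions.

from typing import Sequence


def ebitda_margins(ebitda: Sequence[float], revenue: Sequence[float]) -> list[float]:
    """EBITDA margin per period: EBITDA divided by revenue."""
    if len(ebitda) != len(revenue):
        raise ValueError("ebitda and revenue must cover the same periods")
    return [e / r for e, r in zip(ebitda, revenue)]


def gain_is_durable(margins: Sequence[float], baseline: float, min_periods: int = 4) -> bool:
    """True only if each of the last `min_periods` margins exceeds the baseline."""
    recent = list(margins[-min_periods:])
    return len(recent) == min_periods and all(m > baseline for m in recent)


if __name__ == "__main__":
    # Hypothetical quarterly figures (same currency units) and assumed pre-AI baselines.
    margins = ebitda_margins([20, 22, 19, 24], [100, 100, 100, 100])
    print(gain_is_durable(margins, baseline=0.18))  # every quarter exceeds 18%
    print(gain_is_durable(margins, baseline=0.20))  # at least one quarter does not exceed 20%
```

Requiring the uplift to hold across several periods is a crude filter for gains that merely reflect a strong economic cycle.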

The productivity phase introduces accountability because AI stops being an experiment and becomes an operating layer.

When AI Moves From Software to Infrastructure

The warning issued by Gartner should not be interpreted as theatrical alarmism. It reflects a structural change in how AI is being deployed. The firm predicts that by 2028, misconfigured AI in cyber-physical systems could shut down national critical infrastructure in a G20 country. Gartner analyst Wam Voster described this possibility with unusual bluntness: “The next great infrastructure failure may not be caused by hackers or natural disasters but rather by a well-intentioned engineer, a flawed update script, or a misplaced decimal.”

The significance of that statement lies in where it locates risk. For years, AI security discussions concentrated on external threats — malicious actors, adversarial inputs and data poisoning attacks. What Gartner highlights instead is internal complexity. As AI becomes embedded inside industrial controls, cooling systems, energy grids and financial transaction engines, configuration errors propagate at machine speed.

A model retrained with slightly incorrect parameters does not affect a single workflow; it can influence thousands of connected assets simultaneously. A software update deployed across distributed systems does not fail quietly; it replicates instantly. Scale amplifies both efficiency and error.

Carl Windsor, Chief Information Security Officer at Fortinet, framed the challenge in operational terms: “As AI increases speed, scale, and dependency across digital environments, resilience must become the organising principle for cybersecurity leadership in 2026 and beyond.”

Speed now means automated execution without sequential human review. Scale means that a single configuration choice affects entire networks of systems. Dependency means organisations rely on these AI systems as default decision layers rather than advisory tools.

In hyperscale data centres, AI regulates cooling optimisation, workload balancing and predictive maintenance scheduling. In national energy grids, similar systems optimise load distribution. In banking networks, automated decision engines assess credit risk and detect fraud in real time. Each of these applications delivers efficiency improvements. Each simultaneously increases the consequences of misconfiguration.

The safeguards required in such environments are neither symbolic nor optional. Parallel monitoring systems must validate AI outputs. Human override mechanisms must remain available. Software updates must be rolled out in stages rather than deployed universally. Digital twin environments must simulate changes before live execution. Version control processes must be precise and auditable.
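Several of these safeguards (staged rollout, independent validation and the ability to reverse a change) can be combined in one control loop. The Python below is a minimal, hypothetical sketch: the site names, the stage fractions and the `apply_update`, `rollback` and `is_healthy` callables are placeholders for whatever deployment tooling and parallel monitoring an operator actually uses, not any real product's API.

```python
# Hypothetical sketch of a staged rollout with health checks and rollback.
# Site names, stage fractions and the three callables are illustrative placeholders.

from typing import Callable, Sequence


def staged_rollout(
    sites: Sequence[str],
    apply_update: Callable[[str], None],
    rollback: Callable[[str], None],
    is_healthy: Callable[[str], bool],
    stages: Sequence[float] = (0.01, 0.1, 0.5, 1.0),
) -> bool:
    """Update sites in widening waves; on any failed check, revert every updated site."""
    updated: list[str] = []
    for fraction in stages:
        # Each wave extends the updated set to this fraction of the fleet (at least one site).
        target = max(1, round(len(sites) * fraction))
        for site in sites[len(updated):target]:
            apply_update(site)
            updated.append(site)
        # Independent monitoring validates every site updated so far before the next wave.
        if not all(is_healthy(s) for s in updated):
            for s in reversed(updated):
                rollback(s)
            return False
    return True


if __name__ == "__main__":
    log: dict[str, str] = {}
    fleet = [f"site-{i}" for i in range(20)]  # hypothetical site names
    ok = staged_rollout(
        fleet,
        apply_update=lambda s: log.__setitem__(s, "updated"),
        rollback=lambda s: log.__setitem__(s, "reverted"),
        is_healthy=lambda s: s != "site-5",  # simulate one faulty site
    )
    print("rollout completed" if ok else f"halted; {len(log)} sites reverted")
```

If any site fails its health check, every site touched so far is reverted and the rest of the fleet never receives the update, which limits how far a flawed update script can spread.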

These measures demand additional capital and institutional discipline. They do not reduce productivity; they protect it. When AI shifts from being an application layered on top of infrastructure to becoming an integral part of it, operational stability and economic performance become inseparable. A system that improves energy efficiency by a few percentage points but introduces systemic shutdown risk is not efficient. It is fragile.

The Global Context: Capital, Regulation and India’s Scale

The Gulf’s AI integration is unfolding within a broader global competition to translate heavy investment into sustainable productivity without destabilising essential systems.

In the United States, hyperscale technology companies are investing tens of billions of dollars into AI-specific infrastructure, from GPU clusters to advanced inference platforms. Public markets initially rewarded rapid expansion, but investor scrutiny has sharpened.

Analysts now examine capital expenditure intensity, hardware depreciation cycles and the speed with which AI services convert into recurring revenue streams. In this model, capital markets impose discipline swiftly; if returns lag expectations, valuation adjusts accordingly.

Europe operates under a different constraint. AI deployment is embedded within a formal regulatory architecture shaped by the European Union’s AI Act and related compliance frameworks. Transparency, explainability and risk classification influence the pace of implementation. The result is often more cautious scaling, where governance legitimacy is prioritised even if time-to-market slows.

China aligns AI directly with its industrial strategy. Deployment is integrated into manufacturing optimisation, logistics coordination and state-led industrial policy. Productivity gains are assessed in terms of output efficiency and strategic capacity rather than quarterly earnings.

India presents yet another structural configuration. Its digital public infrastructure, including Aadhaar and the Unified Payments Interface, created one of the world’s largest real-time digital ecosystems. AI increasingly builds on that foundation across financial inclusion, healthcare diagnostics, agricultural advisory systems and multilingual language technologies tailored to a population of vast diversity.

At the same time, India’s IT services sector confronts a structural transition. Automation through AI reshapes traditional outsourcing models built on labour scale. The economic question in India is not only about compute capacity, but about workforce transformation across millions of technology professionals. Productivity gains must be balanced against employment stability.

The Gulf sits between these models. It deploys capital rapidly, often with sovereign backing that softens immediate shareholder pressure. It aligns infrastructure, education and enterprise policy in compressed timelines. At the same time, it remains deeply connected to global semiconductor supply chains, energy markets and international capital flows. Its labour ecosystem is closely interwoven with India’s, as Indian engineers and developers constitute a significant component of Gulf technology operations.

Energy economics further bind these regions. AI workloads consume immense amounts of power. In the United States, grid expansion must accommodate data centre growth. In Europe, sustainability mandates shape infrastructure planning. In India, grid reliability and renewable integration influence scaling. The Gulf benefits from significant energy resources, yet cooling requirements and sustainability commitments still define cost structures. AI-driven optimisation inside hyperscale facilities can offset computational intensity only if efficiency gains keep pace with accelerating demand.

Every region faces a version of the same test. In the United States, the challenge is sustaining margins under investor scrutiny. In Europe, it is innovating within regulatory constraints. In China, it is aligning AI with long-term industrial capacity. In India, it is managing workforce transition at a population scale. In the Gulf, it is absorbing large-scale sovereign AI infrastructure into enterprise systems and public services quickly enough to generate durable economic returns.

In the Gulf, the timeline between investment and accountability is shorter than in many other regions. Capital has been deployed quickly, infrastructure has been built at speed, and integration is already underway.

If sovereign data centres are heavily utilised, it will signal real demand from government and enterprise. If energy efficiency improves while AI workloads scale, it will indicate that optimisation is working rather than eroding margins. If corporate earnings continue to show stronger EBITDA performance over multiple cycles, it will suggest that AI is contributing structurally to productivity rather than temporarily inflating results.

This is the shift the region is now entering. The conversation is no longer about intent or positioning; it is about proof. The Middle East has moved beyond declaring that it intends to lead in artificial intelligence. It is now in the more exacting phase of demonstrating that the systems it has financed and deployed can deliver sustained economic value while maintaining operational resilience. Ambition has established the foundation; integration will now determine whether that foundation supports long-term growth.