Closing the Complexity Gap: Resilience, Sovereignty, and the New Cloud Security Imperative

“Meeting after meeting, keynote speech after keynote speech, I realise that the pursuit of 100% prevention has become an anachronism.” That is how Alain Sanchez, Fortinet’s EMEA CISO, captures the growing consensus among cybersecurity leaders in his article. The combination of systemic complexity, AI-driven threats, and nation-state-level sophistication makes the total avoidance of incidents not only impossible but, as he argues, a dangerous concept.

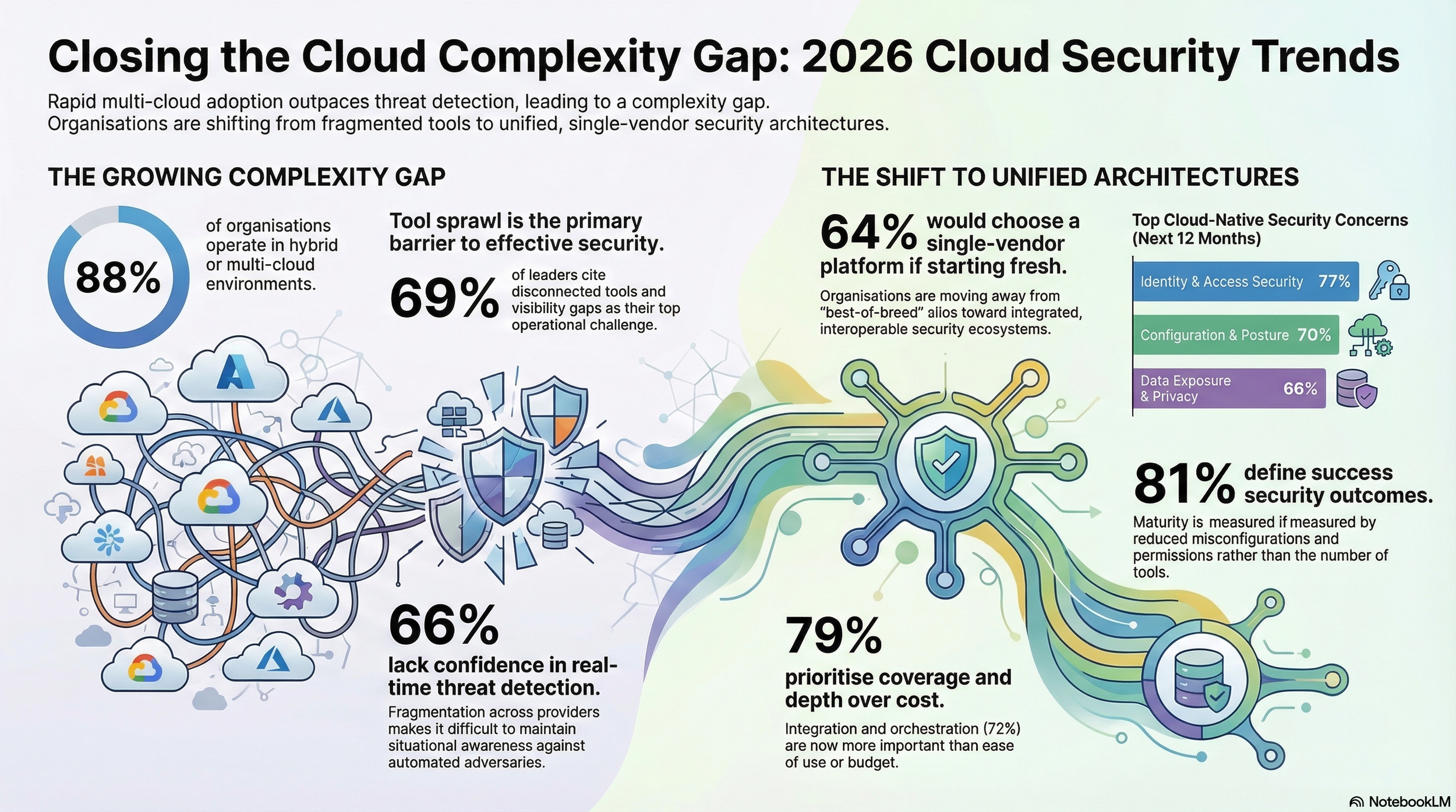

Hard data now supports that view. The 2026 Fortinet Cloud Security Report, based on a survey of 1,163 senior cybersecurity leaders worldwide, reveals that 66% of organisations lack strong confidence in their ability to detect and respond to cloud threats in real time. The gap between what enterprises need and what their security architectures can deliver is widening, not for want of investment, but because the underlying model is structurally mismatched to the speed and complexity of modern cloud environments.

This article synthesises the findings from both sources to explore three interlocking dimensions of that mismatch: the operational complexity of multi-cloud estates, the talent and automation shortfalls that compound it, and the emerging doctrines of resilience and sovereignty that are reshaping how organisations must think about defence.

The Multi-Cloud Reality: Complexity as the Default

Cloud environments are no longer built around a single provider or a clear boundary. The survey confirms that 88% of organisations now operate across hybrid or multi-cloud environments, up from 82% just a year earlier. Of these, 81% rely on two or more cloud providers for critical workloads, and 29% use more than three. This is not an intermediate architectural stage. It is the de facto operating model for the enterprise.

Each new provider, service, or identity introduces additional configurations, permissions, and data paths. Assets shift constantly, non-human identities proliferate, and sensitive data traverses services and regions as a matter of routine. The attack surface does not merely expand; it fragments. And fragmentation, the report finds, has become the single most significant operational barrier to effective cloud security.

Sixty-nine percent of organisations cite tool sprawl and visibility gaps as the top factor limiting their security effectiveness. Rather than reducing risk, security teams spend disproportionate time navigating multiple consoles and manually correlating alerts across systems that were never designed to work together. Detection confidence is eroding as a result: two-thirds of respondents report insufficient confidence in their real-time threat detection and response capabilities, a figure that has risen year on year.

The Budget–Maturity Paradox

If complexity were simply a spending problem, the outlook would be more encouraging. Sixty-two percent of organisations expect their cloud security budgets to increase over the next twelve months, and cloud security now accounts for 34% of total IT security spending on average. Yet increased investment has not translated into proportionate gains in maturity or confidence. Fifty-nine percent of organisations still rate their cloud security posture at the two lowest tiers on a five-stage maturity scale: “initial” or “developing.”

The report identifies a clear mechanism behind this paradox. Each new tool adds complexity, including integration work, console management, and decision fatigue for already-stretched teams. Returns diminish when the underlying architecture requires manual correlation across systems that lack shared context. Much of the rising expenditure is absorbed not by measurable improvements in security outcomes, but by the operational overhead of managing disconnected tools.

Sanchez captures in his article exactly why this happens. The traditional security model, he writes, creates “a false sense of protection—a fortress mentality designed to keep the adversary out.” When environments change faster than controls can follow, that fortress is built on shifting sand.

Complexity Outpaces Talent

Compounding the architectural challenge is a persistent workforce shortage. Seventy-four percent of organisations report an active shortage of qualified cybersecurity professionals, and 77% express high concern about the industry-wide skills gap. These shortages are especially acute in cloud-specific roles, where expertise must span infrastructure, identity, data, and application layers.

Disconnected tools generate a volume of alerts that far exceeds the capacity of understaffed teams. Analysts spend critical hours manually correlating data across consoles, time that should be devoted to higher-value analysis. Policies drift, requiring tuning across multiple platforms, and the operational burden grows whilst the workforce remains stagnant.

The result is a reactive posture. Overextended teams default to alert-driven workflows, triaging what they can and accepting that some signals will inevitably be missed. Proactive threat hunting, architecture improvement, and automation refinement are deprioritised for lack of capacity. Hiring alone cannot close this gap. Even organisations that successfully recruit face lengthy onboarding cycles whilst cloud environments evolve daily.

Where Cloud Risk Concentrates

The report finds that cloud security risk is concentrated in a small number of recurring areas. Identity and access security ranks as the top concern (77%), followed by misconfigured cloud services (70%) and data exposure risks (66%). These three categories consistently overshadow other threats, including workload exploits and supply-chain attacks.

Crucially, these risks form what the report describes as an “exposure chain.” A storage bucket misconfiguration may appear low priority on its own. Combined with an overprivileged service account and a database containing customer records, however, it becomes a direct path to a breach. Most organisations manage these domains independently: posture management tools catch misconfigurations, identity tools flag excessive permissions, data security tools classify sensitive assets. Each sees its own segment clearly, but none sees how the segments combine.
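
The exposure chain described above can be sketched in a few lines of code. The example below is a minimal illustration, not the report's method: the `Finding` type, the sample findings, and the `ACCESS` map of which resource can reach which are all hypothetical. It shows how three findings that look minor in their own tools become one path once they are joined on a shared access graph.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    domain: str    # "posture", "identity", or "data" (one per siloed tool)
    resource: str  # resource the finding is attached to
    detail: str


# Hypothetical findings, as three separate tools might report them.
FINDINGS = [
    Finding("posture", "bucket-a", "publicly readable storage bucket"),
    Finding("identity", "svc-reports", "overprivileged service account"),
    Finding("data", "db-customers", "database holds customer records"),
    Finding("posture", "bucket-b", "versioning disabled"),
]

# Hypothetical access edges: resource -> resources reachable from it.
ACCESS = {
    "bucket-a": ["svc-reports"],
    "svc-reports": ["db-customers"],
    "bucket-b": [],
}


def exposure_chains(findings, access):
    """Return (posture, identity, data) triples linked by access edges."""
    by_resource = {f.resource: f for f in findings}
    chains = []
    for start in findings:
        if start.domain != "posture":
            continue
        for mid_name in access.get(start.resource, []):
            mid = by_resource.get(mid_name)
            if mid is None or mid.domain != "identity":
                continue
            for end_name in access.get(mid_name, []):
                end = by_resource.get(end_name)
                if end is not None and end.domain == "data":
                    chains.append((start, mid, end))
    return chains


if __name__ == "__main__":
    for chain in exposure_chains(FINDINGS, ACCESS):
        print(" -> ".join(f.resource for f in chain))
```

In this sketch, the finding on `bucket-b` never appears in a chain because nothing sensitive is reachable from it, which is the kind of prioritisation a siloed view cannot provide.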

Adversaries actively exploit this gap, using automation and AI to map identity paths, discover misconfigurations, and identify exposed data faster than siloed defences can correlate the signals.

The AI Arms Race and the Automation Gap

Automation has been widely adopted in cloud security workflows, but a critical gap persists between alerting and action. Thirty-seven percent of organisations describe their automation as largely alert-focused, identifying potential issues but leaving remediation to manual processes. Only 11% report fully autonomous remediation capabilities.

The picture is similarly early-stage for AI-driven detection. Thirty-two percent report that their AI adoption is limited to pilot efforts; just 18% describe AI-driven detection as fully operational across their cloud environments. The majority still rely on human-paced workflows to defend environments that change continuously.

Attackers face no such constraints. As AI tools enable them to scan for misconfigurations, map permission paths, and identify exposed data at machine speed, the window between exposure and exploitation continues to compress. The challenge is no longer whether threats can be detected, but whether they can be contained fast enough to prevent damage.

From Security to Resilience: A Philosophical Shift

It is against this backdrop of fragmented architectures, overstretched teams, and adversaries operating at machine speed that the concept of resilience has moved from aspiration to operational necessity.

Sanchez captures in his article the philosophical weight of this transition. “Security, in its traditional sense, creates a false sense of protection,” he writes. “Resilience, by contrast, is about ensuring operational continuity when the walls have been, even slightly, breached. It carries a bit more modesty—the acknowledgment that the breach is inevitable, and the true measure of success lies in the speed and efficacy of the recovery.”

He defines this new paradigm through three core capabilities. The first is Anticipatory Response: learning from a live attack as it unfolds, using the attacker’s own moves to predict where the system might fail next and having recovery tools ready before the damage spreads. The second is Managed Degradation: the strategic decision to maintain a defined set of critical services whilst assuming other parts of the network may be compromised, ensuring that vital functions such as financial transactions, power grid control, or patient care remain operational even at reduced capacity. The third is Rapid Restoration: shifting the organisational mindset from “if we are ever hit” to “how fast can we bounce back,” measured by Recovery Time Objective and underpinned by immutable data backups and tested recovery playbooks.
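
Two of these capabilities lend themselves to a simple illustration. The sketch below assumes a hypothetical service catalogue in which each service carries a criticality flag and a Recovery Time Objective; the service names, thresholds, and function names are invented for the example and do not come from Sanchez's article. It shows Managed Degradation as the selection of a protected service set, and Rapid Restoration as a comparison of measured recovery time against the RTO.

```python
from datetime import timedelta

# Hypothetical catalogue: service name -> (is_critical, RTO).
SERVICES = {
    "payments": (True, timedelta(minutes=15)),
    "patient-records": (True, timedelta(minutes=30)),
    "internal-wiki": (False, timedelta(hours=24)),
}


def degraded_service_set(services):
    """Managed Degradation: the services to keep running during an incident."""
    return sorted(name for name, (critical, _) in services.items() if critical)


def rto_breaches(services, measured):
    """Rapid Restoration: services whose measured recovery exceeded their RTO."""
    return sorted(
        name for name, elapsed in measured.items() if elapsed > services[name][1]
    )


if __name__ == "__main__":
    # Hypothetical results from a recovery drill.
    drill = {
        "payments": timedelta(minutes=20),
        "patient-records": timedelta(minutes=10),
    }
    print("Keep alive:", degraded_service_set(SERVICES))
    print("Missed RTO:", rto_breaches(SERVICES, drill))
```

Running a check like this after each recovery drill is one way to turn "how fast can we bounce back" into a number that can be tracked over time.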

Sanchez draws an illuminating medical analogy to make the concept tangible. “Just as an organism is exposed to a weakened virus to learn and build a controlled, informed immune response,” he writes, “the resilient enterprise uses the very essence of an attack to its advantage.” Far from being a weakness, this approach turns an actual compromise into a learning event, allowing the system to understand the threat more deeply and trigger informed, controlled recovery.

The Critical Infrastructure Imperative

Whilst the shift to resilience is a strategic trend for most organisations, it is rapidly becoming a legal and regulatory obligation for operators of critical infrastructure. Governments are declaring that the ability to withstand and recover from disruption is a matter of national security, and are placing the obligation to be resilient on private operators.

This regulatory impetus is mirrored by a powerful economic consensus. The World Economic Forum’s 2026 report indicates that 92% of CEOs now prioritise cyber recovery capabilities over traditional perimeter defence spending. Major cyber-insurers have begun implementing “Resilience Audits,” with premiums weighted not solely by breach occurrence but also by an organisation’s Recovery Time Objective and the immutability of its data. The OECD has emphasised that ensuring critical infrastructure resilience requires new governance models that limit service disruptions and promote cross-sector collaboration.

As Sanchez observes, this shift cannot succeed through regulation alone. It relies on deep public-private partnerships. “By aligning the government’s security intelligence with the private sector’s operational expertise,” he writes, “these collaborations ensure that sovereignty mandates are both technically feasible and economically sustainable.”

Cloud Sovereignty and Local Control

Resilience is now inextricably linked to technological independence. To meet stringent regulatory requirements, new infrastructure models are emerging. Sovereign cloud partitions offer environments that are physically and logically isolated, with governance structures shielded from foreign jurisdictions, ensuring the control plane for critical data remains within required legal and physical boundaries. Sovereign edge computing, meanwhile, integrates security and processing directly at the network edge, ensuring sensitive industrial data is processed locally before reaching the public internet.
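
A residency rule of this kind can be expressed as a simple policy check. The following is a minimal sketch under stated assumptions: the region names, the resource inventory, and the `sensitive` flag are hypothetical, and a real sovereign deployment would also have to account for governance and jurisdiction, which code like this cannot verify.

```python
# Hypothetical sovereign boundary, expressed as a set of permitted regions.
ALLOWED_REGIONS = {"eu-central", "eu-west"}

# Hypothetical inventory of resources and where they are hosted.
RESOURCES = [
    {"name": "control-plane", "region": "eu-central", "sensitive": True},
    {"name": "backup-vault", "region": "us-east", "sensitive": True},
    {"name": "public-site", "region": "us-east", "sensitive": False},
]


def residency_violations(resources, allowed_regions):
    """Return names of sensitive resources hosted outside the allowed regions."""
    return [
        r["name"]
        for r in resources
        if r["sensitive"] and r["region"] not in allowed_regions
    ]


if __name__ == "__main__":
    print(residency_violations(RESOURCES, ALLOWED_REGIONS))
```

Note that the backup vault is flagged even though the control plane is compliant: if recovery data sits outside the boundary, restoration "within legally defined sovereign boundaries" is not possible.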

These developments reflect a convergence of the resilience and sovereignty agendas. The ability to maintain operational continuity during an incident increasingly depends on controlling where data resides, who governs it, and how quickly it can be restored within legally defined sovereign boundaries.

Platform Consolidation: The Industry’s Response

The survey data suggests that organisations are responding to these pressures by reassessing their security architectures. When asked how they would build their security strategy if starting afresh, nearly two-thirds (64%) say they would choose a single-vendor platform that unifies network, cloud, and application security. Only 27% would continue with a best-of-breed approach involving disparate, function-specific tools managed independently.

This preference reflects operational exhaustion. Security teams are seeking fewer platforms that can share telemetry, policy, and context across domains. Importantly, unification does not imply a single monolithic product. It points to a demand for open, interoperable platforms that integrate through shared data models and coordinated enforcement.

When evaluating cloud security platforms, buyers prioritise coverage and depth across cloud environments (79%), integration and orchestration across security domains (72%), and automation and compliance capabilities (68%), all of which rank above ease of use and cost. The emphasis is on operational synchronisation and outcomes, not simply reducing the number of tools.

The Autonomous Frontier

The technological response to the resilience mandate is manifesting in the rise of autonomous resilience agents and self-healing networks. These move beyond simple blocking mechanisms. They are designed to allow a suspected attack to proceed in a sandboxed environment, automatically generating and distributing immunity signatures across the entire infrastructure.
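
The observe-then-immunise loop can be illustrated with a deliberately simplified sketch. The source does not define what an "immunity signature" contains, so this example assumes the simplest possible stand-in: a SHA-256 hash of a payload observed in the sandbox, pushed to every node's blocklist. The `Node` class and function names are hypothetical, and real systems would rely on far richer behavioural indicators than an exact content hash.

```python
import hashlib


class Node:
    """Hypothetical enforcement point holding a local blocklist of signatures."""

    def __init__(self, name: str):
        self.name = name
        self.blocklist = set()

    def allows(self, payload: bytes) -> bool:
        # A payload is allowed unless its hash matches a known signature.
        return hashlib.sha256(payload).hexdigest() not in self.blocklist


def signature_from_sandbox(payload: bytes) -> str:
    """Derive a signature from a payload observed in the sandbox (assumed: its hash)."""
    return hashlib.sha256(payload).hexdigest()


def distribute(signature: str, nodes) -> None:
    """Push the signature to every node so one observation protects all of them."""
    for node in nodes:
        node.blocklist.add(signature)


if __name__ == "__main__":
    fleet = [Node("edge-1"), Node("edge-2"), Node("core-1")]
    sample = b"payload observed in sandbox"  # placeholder bytes
    distribute(signature_from_sandbox(sample), fleet)
    print([node.allows(sample) for node in fleet])
```

The design point is the fan-out: the payload is observed once, in isolation, and the resulting signature is applied everywhere.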

Sanchez underlines the significance of this shift in his article. “Instead of failing to prevent the attack, the system uses the attack itself as a data point to rapidly learn, adapt, and restore,” he writes. It is, in his framing, the ultimate expression of Managed Degradation: turning a localised compromise into a global defence advantage.

Conclusion: The Architect of Continuity

The convergence of operational data and strategic doctrine paints a coherent picture. Multi-cloud complexity is growing faster than security teams can manage it. Budgets are rising, but maturity lags. Talent shortages force reactive postures. Attackers exploit the seams between siloed tools. And regulators are raising the stakes, particularly for critical infrastructure operators, by mandating resilience and sovereignty as non-negotiable obligations.

Five principles emerge from the data for organisations seeking to close the complexity gap: treat visibility as a foundation; reduce fragmentation through consolidation; connect identity, configuration, and data risk domains rather than assessing them in isolation; automate for outcomes rather than alerts; and extend integration beyond cloud boundaries to encompass network, SaaS, and endpoint visibility.

As Sanchez writes, “The CISO’s mission is transforming from being the gatekeeper of the fortress to the architect of continuity. The focus is no longer on the impossible task of preventing every single attack, but on building systems that are inherently adaptive, capable of absorbing shocks, and designed for rapid, assured recovery within legally defined sovereign boundaries.”

In this new environment, the resilient and sovereign organisation is the one that can take the hit, learn from the experience, maintain what matters most, and move forward with minimal disruption. That is the standard to which the industry is now being held.