The AI That Hunts Bugs: How Claude Code Security Can Reshape the Cybersecurity Landscape

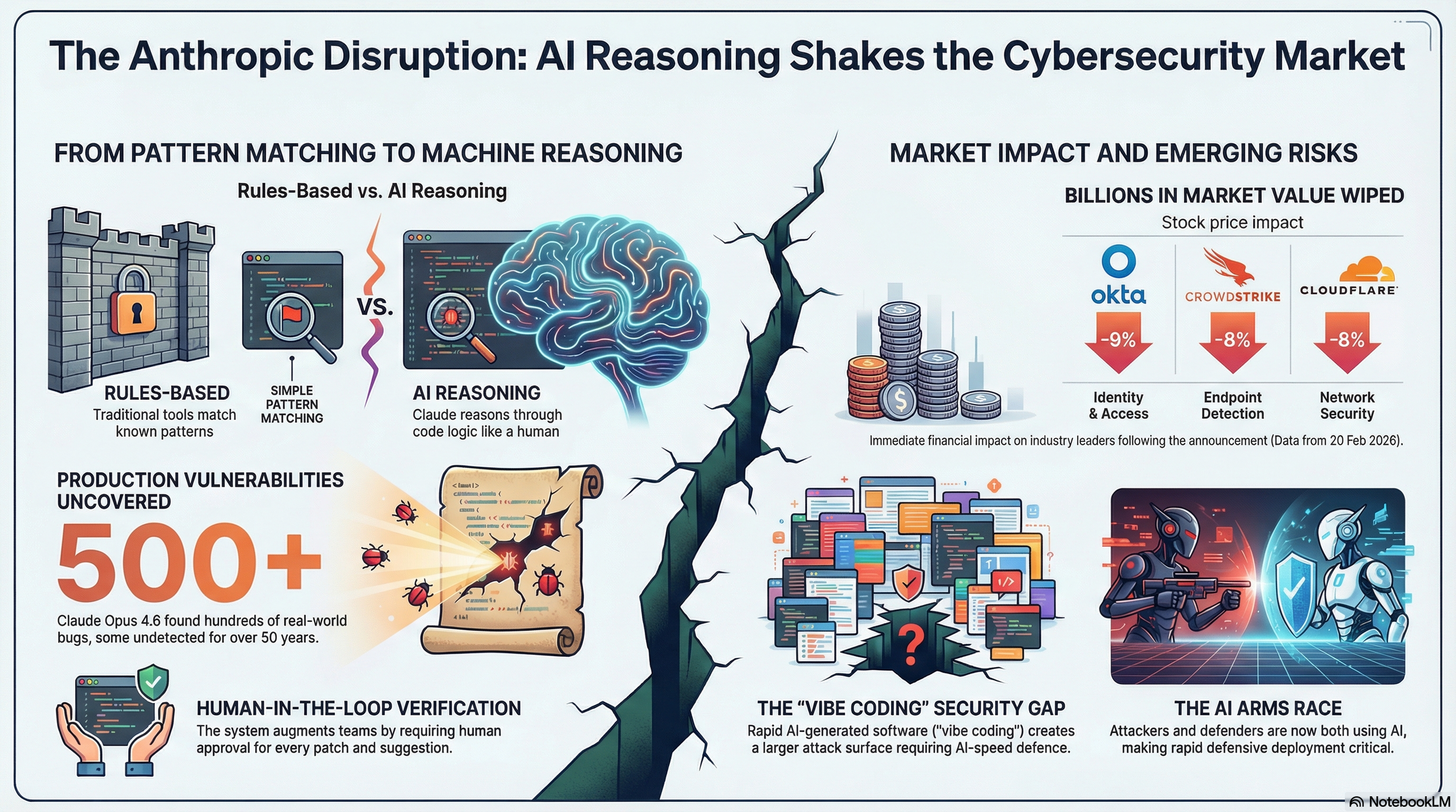

On February 20, 2026, Anthropic quietly detonated a grenade in the cybersecurity industry. The announcement was measured in tone, framed as a research preview with a limited rollout and responsible-disclosure language, but the market reaction was anything but quiet. Within hours, cybersecurity stocks fell sharply across the board, and the world began asking a serious question: has AI just made an entire category of professional software obsolete?

Before weighing that question, it helps to be clear about what Claude Code Security is. It is a new capability built directly into Claude Code on the web. It is designed to scan entire software codebases for security vulnerabilities and suggest targeted patches, all subject to human review before anything is applied. Anthropic began rolling it out as a limited research preview to Enterprise and Team customers on February 20, with expedited access offered to maintainers of open-source repositories.

To understand why this matters, you need to understand what came before it. Traditional security scanning tools, known as static analysis tools, work by matching code against a database of known vulnerability patterns. They are good at catching obvious mistakes such as hardcoded passwords and credentials, and outdated encryption libraries. But they are fundamentally rule-based, and rules can only catch what their authors anticipated.
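To make the rule-based approach concrete, here is a minimal sketch of a pattern-matching scanner in Python. The rule names and regular expressions are hypothetical and were written for illustration. They are not taken from any real product. The point is that each rule fires only on text its author anticipated.

```python
import re

# Hypothetical rule set: each rule is a name plus a regex for a known-bad pattern.
RULES = {
    "hardcoded-password": re.compile(r"""password\s*=\s*["'][^"']+["']""", re.IGNORECASE),
    "weak-hash-md5": re.compile(r"\bhashlib\.md5\("),
}


def scan(source: str) -> list:
    """Return (line_number, rule_name) for every line that matches a rule."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for name, pattern in RULES.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


sample = '''
import hashlib
password = "hunter2"
digest = hashlib.md5(data).hexdigest()
'''

print(scan(sample))  # [(3, 'hardcoded-password'), (4, 'weak-hash-md5')]
```

Anything that does not look like one of those two patterns passes silently, however dangerous it is.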

Claude Code Security takes a different approach. Rather than matching patterns, it reads and reasons about code the way a skilled human security researcher would. It traces how data moves through a system, works out how components interact, and identifies flaws in business logic and access control that rule-based tools routinely miss. In other words, it is not just faster pattern matching; it is something closer to machine intuition applied to code.
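As an illustration of the kind of access-control flaw described here, consider a hypothetical invoice lookup with an insecure direct object reference. Every line looks harmless, so a pattern-based rule has nothing to match. The bug is a missing business rule. This toy example shows the class of bug only. It says nothing about how Claude Code Security detects such bugs internally.

```python
from dataclasses import dataclass


@dataclass
class Invoice:
    id: int
    owner: str
    amount: float


# Toy in-memory store standing in for a database (hypothetical data).
INVOICES = {
    1: Invoice(id=1, owner="alice", amount=120.0),
    2: Invoice(id=2, owner="bob", amount=75.5),
}


def get_invoice_vulnerable(current_user: str, invoice_id: int) -> Invoice:
    # Clean line by line: no secrets, no dangerous calls.
    # The flaw is what is missing: nothing checks that current_user owns the invoice.
    return INVOICES[invoice_id]


def get_invoice_fixed(current_user: str, invoice_id: int) -> Invoice:
    invoice = INVOICES[invoice_id]
    # The business rule the vulnerable version forgot: users may only read their own invoices.
    if invoice.owner != current_user:
        raise PermissionError(f"{current_user} may not read invoice {invoice_id}")
    return invoice


print(get_invoice_vulnerable("alice", 2).owner)  # bob: alice just read someone else's invoice

try:
    get_invoice_fixed("alice", 2)
except PermissionError as exc:
    print("blocked:", exc)  # blocked: alice may not read invoice 2
```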

The tool includes a multi-stage verification process: each finding is re-examined by the model before it surfaces to an analyst, complete with a confidence rating and a severity score. Nothing is patched automatically. Every fix requires human approval. The system is designed, Anthropic insists, to augment security teams — not replace them.
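The workflow described here can be sketched as a small triage queue. In it, findings carry a severity and a confidence, and nothing is patched without sign-off. The `Finding` fields, the confidence floor of 0.5, and the `apply_patch` gate are hypothetical. Anthropic has not published this as its schema, and the sketch only mirrors the process as announced.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List, Optional


class Severity(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass
class Finding:
    title: str
    severity: Severity
    confidence: float                  # 0.0-1.0, assumed to be set after re-examination
    proposed_patch: str
    approved_by: Optional[str] = None  # stays None until a human signs off


def triage(findings: List[Finding], min_confidence: float = 0.5) -> List[Finding]:
    """Drop low-confidence findings; order the rest by severity, then confidence."""
    kept = [f for f in findings if f.confidence >= min_confidence]
    return sorted(kept, key=lambda f: (f.severity, f.confidence), reverse=True)


def apply_patch(finding: Finding) -> str:
    """Refuse to release a patch that no human has approved."""
    if finding.approved_by is None:
        raise PermissionError(f"patch for {finding.title!r} has not been approved")
    return finding.proposed_patch


queue = triage([
    Finding("Missing ownership check", Severity.HIGH, 0.9, "add owner check"),
    Finding("Possible SQL injection", Severity.CRITICAL, 0.8, "use bound parameters"),
    Finding("Verbose error message", Severity.LOW, 0.3, "trim message"),
])
print([f.title for f in queue])  # ['Possible SQL injection', 'Missing ownership check']

queue[0].approved_by = "analyst@example.com"  # the human sign-off step
print(apply_patch(queue[0]))                   # use bound parameters
```

Sorting by severity before confidence keeps a likely-critical issue ahead of a near-certain but less serious one. That is one reasonable policy among several.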

500 Vulnerabilities That Should Not Have Been There

The headline figure from Anthropic's announcement is striking. The company's internal Frontier Red Team used Claude Opus 4.6, released just two weeks earlier on February 5, 2026, to find over 500 vulnerabilities in production open-source codebases. These were not theoretical vulnerabilities or edge cases discovered in lab conditions. They were real bugs in real code, running in real production environments.

What makes this particularly alarming and significant is how long many of these bugs had been hiding. Some had gone undetected for decades, despite years of expert human review. Anthropic says it is now working through triage and responsible disclosure with the affected maintainers.

This is not a marginal improvement in detection capability. If a model can surface decades-old bugs in well-reviewed open-source codebases in the course of a research exercise, the implications for software that has never received such scrutiny are profound. The total universe of undetected, exploitable vulnerabilities in production code is unknowable, but it is almost certainly much larger than the industry has assumed.

The Frontier Red Team has been building toward this capability for over a year — entering Claude in competitive Capture-the-Flag cybersecurity events, partnering with Pacific Northwest National Laboratory to experiment with AI-assisted defence of critical infrastructure, and systematically stress-testing the model's ability to find and patch real vulnerabilities. The 500+ bug discovery was not a lucky accident. It was the output of a deliberate research program that is now being productized.

The Market Reacts: Billions Wiped in Hours

The cybersecurity industry did not wait for analysts to weigh in. Within hours of Anthropic's announcement, a wave of selling swept through the sector that would have been difficult to explain as a coincidence.

CrowdStrike — the dominant player in endpoint detection and response, and one of the most closely watched names in enterprise security — fell approximately 7-8% on the day. Okta, which specialises in identity and access management, dropped more than 9%. SailPoint, which focuses on identity governance, shed over 9% as well. Cloudflare, which provides network security and DDoS protection, fell close to 8%. The Global X Cybersecurity ETF — a diversified basket of cybersecurity stocks — closed at its lowest level since November 2023.

To put it plainly: the market wiped billions of dollars in value from cybersecurity companies in a single session, on the day an AI company announced it could find software bugs. The signal investors were sending was clear. If AI can now perform core security research tasks, the addressable market for specialised security software and services is suddenly open to question.

SiliconAngle's analysis added important context to the selloff: Claude Code Security is rolling out approximately four months after OpenAI released Aardvark, its own cybersecurity automation tool. This is not a single disruption event but a pattern. Two of the world's leading AI labs are now converging on cybersecurity as a high-value application domain, and incumbent vendors are caught in the crossfire.

Why This Is Different from Every Security Tool Before It

Security software has used AI before — but in a much more limited way. Think of it like a bouncer working from a list of banned faces. If your name is on the list, you don't get in. But if it's a new face the bouncer has never seen? You walk straight through. That's how most AI security tools have worked: they get shown thousands of known threats, they learn to recognise those specific threats, and they flag anything that looks the same. Powerful, but limited. They can only catch what they've already been taught to look for.

Claude Code Security works differently. Instead of matching against a list, it actually reads and thinks through the code — the same way a skilled human security expert would. It follows the logic of a program from start to finish, asks whether the assumptions the developer made actually hold up, and spots places where something could go wrong that no one has ever flagged before. It does not need to have seen that specific type of problem previously to recognise that something is wrong.

That is the leap. Not faster pattern matching — genuine reasoning. And the 500+ bugs found in code that expert humans had reviewed for years is the clearest evidence yet that the leap is real. Some security researchers argue AI still needs humans for the most complex threats. That argument gets harder to make every time a decades-old bug surfaces that no human ever caught.

The Problem Nobody Wants to Say Out Loud

Here is the uncomfortable reality sitting underneath this announcement: the same AI that finds a bug so it can be fixed can also find a bug so it can be exploited. A tool powerful enough to help a security team patch a weakness is, in the wrong hands, a tool powerful enough to help an attacker find that weakness first.

Anthropic's answer to this is a careful rollout — limited access, a requirement that users only scan code they actually own, and a system where no fix is ever applied without a human signing off on it. The goal is to make sure the people doing the finding are the people trying to protect systems, not attack them.

But here is the harder truth: nation states and criminal hacking groups do not need Anthropic's permission to use AI for attacks. Google has already reported that government-backed hackers are using AI tools for reconnaissance — scoping out targets before they strike. The race between AI-powered attackers and AI-powered defenders is not coming. It is already happening. Anthropic bets that getting powerful defensive tools into the market fast is the right call — even knowing that the same underlying technology exists on the other side of the fence.

What It Means for Cybersecurity Companies

There is an entire category of security companies (Veracode, Checkmarx, Snyk, Synopsys) whose core business is scanning code for vulnerabilities. That is, more or less, what Anthropic has now built into Claude Code and bundled in for Enterprise customers at no extra charge. If developers are already using Claude to write code, and Claude can now check that same code for security problems in the same window, the case for paying separately for a standalone scanning tool becomes a much harder sell.

CrowdStrike's business is different — it monitors computers and devices in real time, looking for signs of an attack that is already underway. Claude Code Security operates before any of that, at the point where code is still being written. These are genuinely separate problems. But investors did not make that distinction on the day of the announcement. The reasoning appears to be simpler and more sweeping: if AI can do security work at this level, every company charging premium prices for security expertise is exposed. CrowdStrike fell nearly 8% not because Claude directly replaces it, but because the market is asking a bigger question about the whole sector.

Okta and SailPoint manage who gets access to what inside a company — making sure the right people can log in and the wrong people cannot. Claude Code Security does not touch that problem at all. And yet both stocks dropped over 9%. The honest explanation is that fear does not stay contained to its source. Investors are looking at the cybersecurity sector as a whole and asking: how much of what these companies do can AI eventually absorb? Nobody has a clean answer to that yet — and in markets, uncertainty gets sold.

One of the more quietly significant elements of Anthropic's announcement is its offer of expedited, free access to open-source repository maintainers. Open-source software is foundational infrastructure for virtually every piece of enterprise technology in the world. It is also chronically under-resourced from a security perspective.

The 500+ vulnerabilities found in Anthropic's internal research were in open-source codebases. Some had been sitting there for decades. If Claude Code Security can systematically work through the open-source ecosystem and responsibly disclose what it finds, the downstream security benefits could be substantial — and extend far beyond the Enterprise customers paying for the service.

This is also, of course, an exceptionally effective marketing strategy. Every vulnerability responsibly disclosed from an open-source project becomes a case study. Every patched bug that could have been exploited becomes a proof point. Anthropic is seeding the ground for a much larger commercial play by demonstrating capability in the most visible, most scrutinised code in existence.

The Vibe Coding Problem

There is a second, forward-looking dimension to this announcement that deserves equal attention. Anthropic's announcement explicitly references the rise of what the industry has taken to calling "vibe coding" — the practice of using AI to generate software rapidly, often by people without deep programming backgrounds.

Vibe coding has democratised software creation in genuinely remarkable ways. It has also created a looming security problem. Code generated quickly by AI models and deployed by people who may not have the expertise to audit it is likely to contain more vulnerabilities, not fewer. As this type of software proliferates, and it is proliferating rapidly, the attack surface of the internet expands with it.
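SQL injection is a classic example of the kind of flaw that can slip into quickly generated code. The sketch below contrasts a query built by string formatting with a parameterised one. It uses Python's built-in `sqlite3` module, an in-memory database, and made-up table contents. The example is illustrative and is not drawn from Anthropic's announcement.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, email TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [("alice", "alice@example.com"), ("bob", "bob@example.com")],
)


def find_user_unsafe(name: str):
    # String formatting splices user input directly into the SQL text.
    query = f"SELECT name, email FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(name: str):
    # A "?" placeholder makes sqlite3 treat the input strictly as data, never as SQL.
    return conn.execute("SELECT name, email FROM users WHERE name = ?", (name,)).fetchall()


payload = "' OR '1'='1"
print(len(find_user_unsafe(payload)))  # 2: the injected OR clause returns every row
print(len(find_user_safe(payload)))    # 0: no user has that literal name
```

Both functions work for ordinary input, which is why this bug survives casual testing. The difference only shows when someone sends hostile input.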

Anthropic bets that an embedded vulnerability scanner is the natural complement to an embedded coding assistant. If you are going to use AI to write your code, you should also use AI to check your code for security issues before it causes harm. This is a coherent product vision — and it positions Anthropic not just as a coding tool vendor but as an end-to-end secure software development platform.

What Comes Next

Claude Code Security is, by Anthropic's own description, a limited research preview. It is not a finished product. The company is being deliberate about this — and appropriately so, given the stakes. A vulnerability scanner that surfaces false positives at scale, or that itself contains exploitable weaknesses, would be worse than no scanner at all.

The obvious next frontier, flagged by SiliconAngle, is integration with CI/CD pipelines: the continuous integration and continuous delivery systems that most enterprise engineering teams use to ship software. Today, Claude Code Security operates as a scan-on-demand tool. Tomorrow, it could sit inside the deployment pipeline and block releases that contain critical vulnerabilities before they ever reach production. Established cybersecurity companies already offer this capability. If Anthropic builds or enables it, the competitive implications deepen further.
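A release gate of this kind can be as simple as a script that reads a scanner's report and fails the pipeline step when a blocking finding is present. Most CI systems treat a non-zero exit code from a step as a failure. The JSON report format, field names, and severity labels below are hypothetical assumptions. They are not the output format of Claude Code Security or of any other specific tool.

```python
import json
import sys
from typing import Dict, List

# Hypothetical report format: a JSON array of findings, each with "id", "severity"
# and optionally "title". Adapt the field names to whatever your scanner emits.
BLOCKING_SEVERITIES = {"critical"}


def blocking_findings(report_json: str) -> List[Dict]:
    """Return the findings whose severity should stop a release."""
    findings = json.loads(report_json)
    return [f for f in findings if str(f.get("severity", "")).lower() in BLOCKING_SEVERITIES]


def gate(report_json: str) -> int:
    """Return an exit code: 0 lets the pipeline continue, 1 blocks the release."""
    blockers = blocking_findings(report_json)
    for f in blockers:
        print(f"BLOCKED by {f['id']}: {f.get('title', '(untitled)')}", file=sys.stderr)
    return 1 if blockers else 0


sample_report = json.dumps([
    {"id": "F-1", "severity": "high", "title": "Missing ownership check"},
    {"id": "F-2", "severity": "CRITICAL", "title": "SQL injection in login"},
])
print(gate(sample_report))  # 1: F-2 is critical, so this release would be blocked
```

In a real pipeline, the step would read the scanner's report file and pass the return value of `gate(...)` to `sys.exit`. Where to draw the blocking line (critical only, or high as well) is a policy choice for each team.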

Anthropic also indicated it intends to expand its security work with the open-source community. That means more vulnerability discovery, more responsible disclosure, and more evidence accumulation — all of which will compound the already formidable demonstration effect of the 500+ bugs found to date.

The Bigger Picture: Competition Is Not the Only Play

Anthropic ended its announcement with a sentence that deserves to be read carefully: "We expect that a significant share of the world's code will be scanned by AI in the near future." This is not a hope or an aspiration. It is a prediction about where the industry is heading, stated with the confidence of a company that believes it is at the front of that wave.

The market's instinct was to read that as a threat, and for significant parts of the cybersecurity industry, it is. But the more interesting long-term story may not be displacement. It may be convergence.

Consider what each side actually has. Anthropic has a reasoning engine that can read code and surface vulnerabilities with the kind of nuance that rule-based tools have never managed. CrowdStrike has more than a decade of threat intelligence, endpoint telemetry from millions of devices, and deep institutional knowledge of how attacks actually unfold in the real world. Cloudflare sits at the network layer, seeing traffic patterns across a significant slice of the internet in real time.

Okta holds the identity graph — who is accessing what, from where, and when. Snyk and Veracode are embedded in developer workflows that enterprises have spent years building habits around.

Anthropic has none of those things. And a vulnerability found in source code is only part of the picture. You also need to know whether it is being actively targeted, whether the identity accessing it is behaving normally, and whether the network traffic around it looks clean. That is a different kind of intelligence, and it lives in different systems.
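One way to picture that combination is a toy prioritisation rule. It starts from a code finding's severity and raises the score when other layers report trouble at the same place. The data shapes, endpoint names, and weights are invented for illustration. They do not correspond to any vendor's scoring model.

```python
from dataclasses import dataclass
from typing import Set


@dataclass
class CodeFinding:
    endpoint: str   # route served by the vulnerable code (hypothetical)
    severity: int   # 1 (low) to 4 (critical)


def priority(finding: CodeFinding, attacked: Set[str], anomalous: Set[str]) -> int:
    """Toy score: start from code severity, add weight for signals from other layers."""
    score = finding.severity
    if finding.endpoint in attacked:    # e.g. edge or WAF telemetry shows active probing
        score += 2
    if finding.endpoint in anomalous:   # e.g. identity telemetry shows unusual access
        score += 1
    return score


findings = [CodeFinding("/api/invoices", 3), CodeFinding("/api/export", 3)]
attacked = {"/api/export"}
anomalous = {"/api/export"}

ranked = sorted(findings, key=lambda f: priority(f, attacked, anomalous), reverse=True)
print([(f.endpoint, priority(f, attacked, anomalous)) for f in ranked])
# [('/api/export', 6), ('/api/invoices', 3)]
```

Two findings of identical severity in the source code end up far apart once network and identity signals are added. That is the practical case for combining layers rather than choosing between them.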

The most powerful form AI-assisted security could take is not a single tool doing everything. It is Anthropic's reasoning layer sitting inside the pipelines that companies like CrowdStrike, Cloudflare, and Palo Alto Networks already operate. There it would contribute its ability to understand code and context, while drawing on the threat data, network visibility, and identity intelligence that the incumbents have spent years accumulating.

Some of this is already happening. Cloudflare has been building AI-native security products and working with foundation model providers to enhance its detection capabilities. CrowdStrike's Charlotte AI is an attempt to bring conversational AI into the security operations centre. The direction of travel across the industry is the same: AI reasoning applied to security data at scale.

The question is whether these companies become competitors or collaborators, and the honest answer is probably both, depending on the layer. At the code and development level, Anthropic is moving into territory that specialist vendors have owned. At the network, endpoint, and identity layers, the incumbents have structural advantages that are not easy to replicate.

A world where Claude Code Security catches the vulnerability before deployment, Cloudflare catches the anomalous traffic at the edge, CrowdStrike catches the lateral movement on the endpoint, and Okta flags the compromised identity trying to exploit it — that world is more secure than any one of those tools operating alone.
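The intuition behind layered defence can be written down under a strong simplifying assumption: that each layer detects an attack independently of the others. Suppose layer i catches a given attack with probability d_i. Then the attack gets past all n layers only if every layer misses it:

```latex
P(\text{attack evades every layer}) = \prod_{i=1}^{n} \left(1 - d_i\right)
```

Take four layers that each catch a hypothetical 60% of attacks. The chance of evading all of them is 0.4^4 = 0.0256, roughly 2.6%, against 40% for any single layer on its own. Real layers share blind spots, so their misses are correlated and the true figure will be higher. The direction of the argument still holds.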

The cybersecurity problem has never been solved by any single company. It will not be solved by AI either. But AI changes what is possible at every layer — and the companies that figure out how to combine their strengths with the capabilities that models like Claude bring to the table will be the ones that define what security looks like for the next decade.

For security professionals, the arrival of Claude Code Security is a complicated moment. It is a tool that could genuinely help them do their jobs better — finding vulnerabilities faster, reducing backlogs, and covering more code than any team could manage manually. It is also a signal that the definition of their job is about to change, perhaps significantly.

For the rest of us — users of software, customers of companies that run software, citizens of an economy built on software — the news is mostly good. More bugs found. More patches applied. Fewer vulnerabilities for attackers to exploit. The catch is that the same AI that helps defenders is available to attackers too. The race is on.

The selloff may have been the market's first reaction. Partnership announcements may well be the second.