The AI Security Confidence Paradox: Why 87% of Organisations Think They Are Ready — and 46% Are Wrong

Nine in ten organisations are actively pressuring their security teams to loosen identity controls to keep pace with AI-driven automation, and nearly one in five are applying what respondents described as strong pressure. That is the headline finding from Delinea's 2026 Identity Security Report, which surveyed 2,001 IT decision-makers at organisations actively using or piloting AI across the UK, US, Germany, France, Australia, Singapore, and India. The picture it paints is of an industry outrunning its own guardrails.

"The pressure to move fast on AI is real, but identity governance has not kept pace, which exposes enterprises to significant risk," said Art Gilliland, CEO at Delinea. "As AI agents multiply across enterprise environments, these identities often have the least oversight. The organisations that will succeed in the AI era will be the ones that enforce real-time, contextual access across every human, machine, and agentic AI identity."

The Confidence Paradox

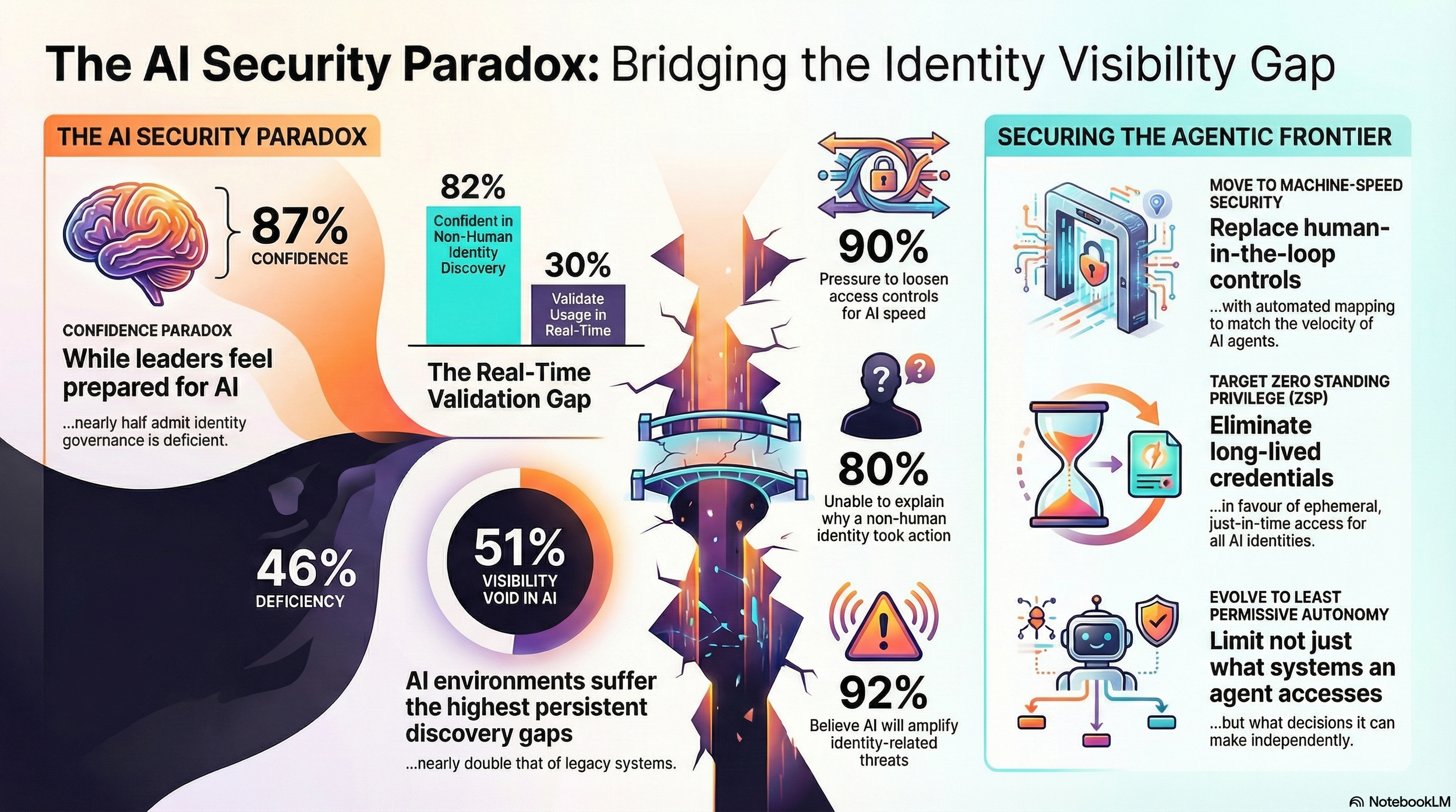

The report's most striking finding is a pervasive disconnect between how prepared organisations believe they are and what the data actually supports. While 87% of respondents said their identity security posture was ready to support AI-driven automation at scale, 46% of those same organisations admitted that their identity governance for AI systems was deficient. Respondents were twice as likely to give low marks to their ability to discover and govern identities in AI-related environments as in legacy systems.

The gap is particularly stark around non-human identities (NHIs) — the service accounts, bots, API connectors, and AI agents that now vastly outnumber human accounts in most enterprise environments. While 82% of respondents expressed confidence in their ability to discover NHIs with access to production systems, fewer than one in three organisations actually validate NHI and AI agent activity in real time. The confidence, in other words, is not backed by the mechanisms needed to sustain it.

The Visibility Gap

Across the board, 90% of respondents acknowledged at least some form of identity visibility gap within their organisation. The most persistent blind spots were in AI-related environments, where discovery gaps occurred at nearly double the rate of legacy or on-premises systems — with 51% of organisations reporting ongoing gaps in AI environments compared to 27% for legacy infrastructure. Machine and NHI accounts, including those operated by AI agents, were identified as the number-one source of visibility failures.

Some 42% of organisations said AI expansion had been one of the top factors increasing their NHI risk over the past 12 months, far ahead of increased automation and CI/CD velocity (26%) and growth in cloud-native workloads (also 26%). Meanwhile, 80% of organisations said they could not always understand why an NHI had taken a privileged action, a figure that speaks to the accountability vacuum forming around agentic systems.

Dr Gerald Auger, Head of Simply Cyber, articulated the broader structural problem. "The business is accepting AI risk to stay competitive, but because AI is such a new paradigm, they're accepting it without actually understanding qualitatively or quantitatively what the risk is," he said. "It's so new that CISOs can't yet convey 'here's the risk' in concrete terms. That's a gap on the GRC professional's side, too."

Speed Over Governance

The research found that when security requirements conflicted with business speed, fewer than one in three organisations said security controls were consistently enforced. Approximately 11% said controls were bypassed altogether through shadow use. A quarter reported that exceptions were granted on a case-by-case basis, and another quarter said controls were either temporarily disabled or standing privileges were granted — a distinction that security practitioners note rarely holds in practice.

Chris Hughes of Resilient Cyber drew a direct line to earlier technology transitions. "We keep saying we need to build security in, not bolt it on. But then, every new tech paradigm we give a security hall pass. People go out and do a bunch of innovative new greenfield projects, and security has to come in after the fact and try to harden it," he said. He added that the driver was understandable: "It's being driven by the fact that the business feels like they have to keep pace. Our competitors are doing this. If we don't, we're going to fall behind."

Kayla Williams, vCISO and SANS Institute's 2024 CISO of the Year, pointed to an even longer-running pattern. "We've gone through these technological advancements and transformation programmes time and time again, but we never seem to recognise the correlation. There's a common thread that pulls across all of them, which is identity," she said.

Shadow AI and the NHI Explosion

The proliferation of unsanctioned AI tools is significantly compounding the problem. Some 53% of respondents said they regularly encountered unsanctioned AI tools and agents accessing company systems or data — and those, notably, were only the instances being detected. Just 28% of organisations reported the ability to detect shadow AI activity in real time, while most said detection took hours or days. Gartner has estimated that by 2030, some 40% of organisations will suffer security incidents attributable to shadow AI risks.

Williams described the detection challenge in stark terms. "Agents behave exactly like compromised credentials: they're trusted, they're persistent, they're pretty much invisible once they're inside," she said.

The NHI problem predates agentic AI but has been dramatically accelerated by it. Analysts estimated two years ago that NHIs outnumbered human accounts 46 to 1; a year later, that estimate had nearly doubled to 82 to 1. Today, organisations are more than twice as likely to grant long-lived static credentials to NHIs and AI agents — the method used by 35% of respondents — than to deploy more modern just-in-time authorisation, used by just 17%. Only 8% use ephemeral access. One in ten organisations did not know how they were granting access to NHIs at all.
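To make the difference between these access models concrete, here is a minimal Python sketch, not drawn from the report, contrasting a long-lived static credential with one that is issued at the moment of need (just in time) and expires on its own (ephemeral). The report counts just-in-time and ephemeral access as separate categories; this sketch combines them. The `Credential` type, the `issue_static` and `issue_jit` helpers, and the five-minute TTL are hypothetical illustrations rather than any vendor's API, and a real system would also need secure storage, revocation, and audit logging.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Credential:
    """A hypothetical access credential held by a human, NHI or AI agent."""
    identity: str                 # who holds it, e.g. an AI agent's account name
    scope: str                    # the single privilege it grants, e.g. "db:read"
    token: str                    # random secret presented when the access is used
    expires_at: Optional[float]   # Unix timestamp; None means it never expires

    def is_valid(self, now: float) -> bool:
        """True if the credential may still be used at time `now`."""
        return self.expires_at is None or now < self.expires_at


def issue_static(identity: str, scope: str) -> Credential:
    """Long-lived static credential: valid until someone explicitly revokes it."""
    return Credential(identity, scope, secrets.token_urlsafe(32), expires_at=None)


def issue_jit(identity: str, scope: str, ttl_seconds: float,
              clock: Callable[[], float] = time.time) -> Credential:
    """Just-in-time credential: minted when the task needs it, expires on its own."""
    if ttl_seconds <= 0:
        raise ValueError("ttl_seconds must be positive")
    return Credential(identity, scope, secrets.token_urlsafe(32),
                      expires_at=clock() + ttl_seconds)


if __name__ == "__main__":
    now = time.time()
    static = issue_static("report-agent", "db:read")
    jit = issue_jit("report-agent", "db:read", ttl_seconds=300)  # five minutes
    print(static.is_valid(now + 86_400))  # True: still usable a day later
    print(jit.is_valid(now + 86_400))     # False: expired after five minutes
```

The injectable `clock` parameter is a design choice for testability; the point of the sketch is that a leaked static token stays useful indefinitely, while a leaked short-lived one ages out without anyone having to remember to revoke it.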

Hughes captured the structural risk. "The reason these tools are so vulnerable and potentially risky is that access gives them so much utility," he said. "Agentic AI does things we didn't anticipate because it's non-deterministic. And in many cases, it can be hard to even understand why it did something or why it asked for elevated privileges to do that action."

Identity as the Attack Surface

The governance gaps identified by the research are all the more significant given the current threat landscape. Some 92% of respondents believed AI would amplify identity-related threats over the coming years, with credential stuffing and password attacks (cited by 33%) and privileged account compromise (cited by 31%) leading their concerns. Gal Diskin, VP of Identity Threat Product and Research and head of Delinea Labs, described the shift in attack methodology: "These attacks started from stolen credentials and propagated by stealing more credentials. The whole goal is identity. The whole method of propagation is identity."

Williams put it more directly: "Attackers don't need to break in because we are legitimately handing them access." Diskin added a further caution: "Legitimate access does not mean safe access. A lot of organisations are confident they will detect something, but detecting after the fact is very different from controlling and preventing before the fact."

The Path Forward

The report's expert panel was clear that the solution was not to slow AI adoption but to evolve identity governance in step with it. The foundational imperative was visibility: organisations cannot govern what they cannot see. From there, the prescription involved moving toward just-in-time and ephemeral access models, applying zero-trust principles consistently to agent accounts, and eventually reaching a state of zero standing privilege — where no persistent admin rights exist for agents. For most organisations, that destination remains distant. Auger was characteristically pragmatic: "I'll count it as a win if we just have an inventory of all the identities that they have standing access to."
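The inventory Auger describes can also serve as a yardstick for the distance to zero standing privilege. The minimal Python sketch below assumes identity records have already been collected from directories, cloud IAM, and secrets stores, which is itself the hard discovery problem the report highlights. The `IdentityRecord` schema and the `standing_privilege_inventory` function are hypothetical, not part of any product or of the report's methodology.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class IdentityRecord:
    """One discovered identity (hypothetical schema)."""
    name: str
    kind: str         # e.g. "human", "service_account", "ai_agent"
    privileged: bool  # holds admin or other privileged rights
    standing: bool    # True if that access is persistent rather than granted just in time


def standing_privilege_inventory(records: Iterable[IdentityRecord]) -> List[IdentityRecord]:
    """Return identities that still hold persistent privileged access, sorted by kind and name.

    Under zero standing privilege this list would be empty: privileged rights
    would exist only as time-bound, just-in-time grants.
    """
    return sorted(
        (r for r in records if r.privileged and r.standing),
        key=lambda r: (r.kind, r.name),
    )


if __name__ == "__main__":
    records = [
        IdentityRecord("alice", "human", privileged=True, standing=False),
        IdentityRecord("ci-deployer", "service_account", privileged=True, standing=True),
        IdentityRecord("report-agent", "ai_agent", privileged=True, standing=True),
        IdentityRecord("metrics-bot", "service_account", privileged=False, standing=True),
    ]
    for r in standing_privilege_inventory(records):
        print(r.kind, r.name)
    # ai_agent report-agent
    # service_account ci-deployer
```

Each entry the function returns is a candidate for conversion to just-in-time or ephemeral access; an empty result is the zero-standing-privilege end state.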

Hughes argued that the security community needed to resist fatalism and instead use the same AI tools that are transforming the threat landscape to modernise its own capabilities. "Security teams can adopt the same AI technologies and innovations to improve their own operations and move fast enough to work at the pace of agentic AI innovation," he said. In principle, that would turn the race into something security could run rather than merely chase.